Security teams do not get to choose when incidents happen. Friday-night disclosures, weekend breaches, critical CVEs that land at 11pm: defenders work on the attacker’s schedule, not their own. Most of them also work with significantly fewer resources than the adversaries they are defending against. That asymmetry is exactly what OpenAI is trying to address.

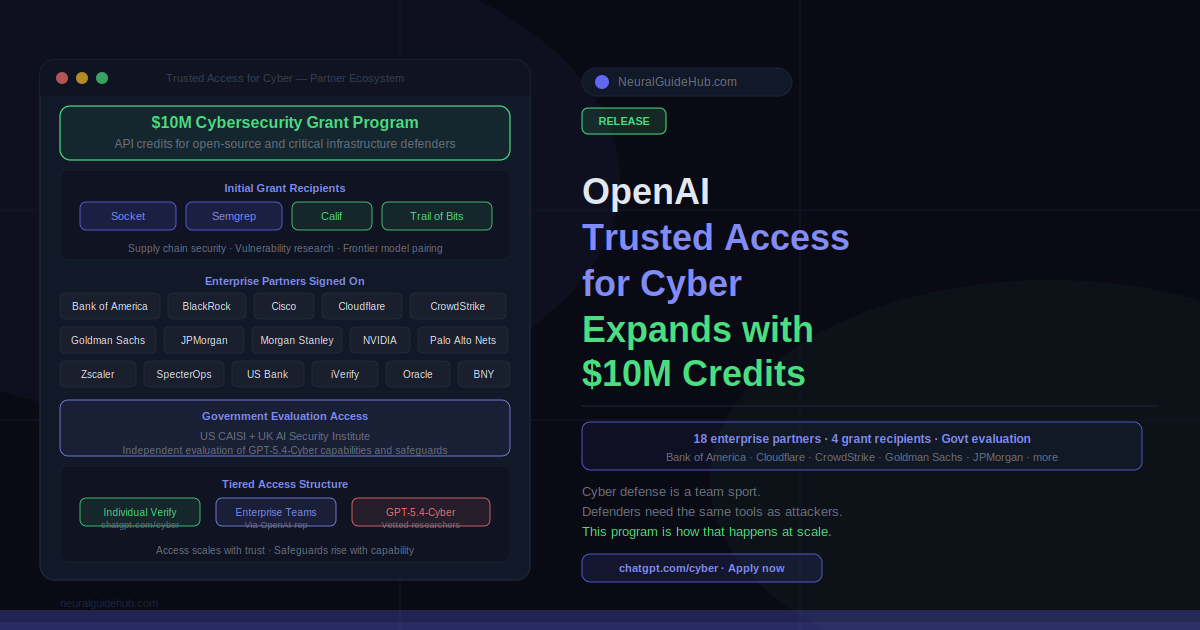

Trusted Access for Cyber is OpenAI’s framework for getting advanced AI capabilities to defenders at scale, with access tiered by trust, validation, and safeguards. Today the program expanded significantly: new enterprise partners across banking, security, and critical infrastructure signed on, $10 million in API credits became available through the Cybersecurity Grant Program, and access to GPT-5.4-Cyber was extended to government AI safety institutes for independent evaluation.

Who Is Joining Trusted Access for Cyber

The list of organizations that have already signed up reads like a cross-section of the institutions responsible for keeping global digital infrastructure running. Bank of America, BlackRock, BNY, Citi, Cisco, Cloudflare, CrowdStrike, Goldman Sachs, iVerify, JPMorgan Chase, Morgan Stanley, NVIDIA, Oracle, Palo Alto Networks, SpecterOps, US Bank, and Zscaler are all participating.

That is not a typical beta program list. These organizations operate some of the most complex and heavily targeted digital environments on earth. Their participation is not just about access; it generates real-world data on how frontier AI capabilities perform in actual defensive workflows, data that OpenAI needs to improve the safety systems around these tools.

The approach is deliberate. OpenAI is building the trust, verification, and accountability infrastructure that lets it expand access responsibly. Each participating organization represents a different threat surface, a different set of defensive workflows, and a different category of data about what works and what does not.

The $10M Cybersecurity Grant Program

Not every security team has a 24/7 incident response capability. Much of the work of keeping open source software and critical infrastructure secure happens in small teams, research organizations, and nonprofits that operate on tight budgets with no enterprise security stack behind them.

The $10 million in API credits through the Cybersecurity Grant Program is aimed directly at that part of the ecosystem. Initial recipients include Socket and Semgrep, which focus on software supply chain security, and Calif and Trail of Bits, which pair frontier models with vulnerability research expertise.

OpenAI is actively looking to expand this cohort. The target profile is teams with a proven track record of identifying and remediating vulnerabilities in open source software and critical infrastructure: not teams pitching ideas, but teams that have already demonstrated they can find and fix real problems. Applications are open.

GPT-5.4-Cyber Goes to Government Safety Institutes

OpenAI also provided GPT-5.4-Cyber access to the U.S. Center for AI Standards and Innovation (CAISI) and the UK AI Security Institute (UK AISI) for independent evaluation of the model’s cyber capabilities and safeguards.

This matters more than it might appear. Independent government evaluation of a model specifically fine-tuned for cybersecurity work is a meaningful accountability step. Rather than OpenAI evaluating its own model, external institutions with a mandate to assess AI safety are running their own assessments on a more permissive model variant. The results of those evaluations are likely to shape how similar models are handled going forward.

The Logic Behind Tiered Access

The core premise of Trusted Access for Cyber is that cyber capabilities are inherently dual-use, so access should scale with evidence of legitimate use rather than being either fully open or fully restricted. That means identity verification at the individual level, organizational validation at the enterprise level, and government institute evaluation at the model capability level.

The breadth of partners reflects a deliberate strategy. Cybersecurity is not a problem that gets solved by equipping one category of defender. The same vulnerability can be discovered by a solo researcher, patched by an open source maintainer, and exploited against a major bank before any of those three actors know the others exist. Effective defense requires all of them to have access to comparable capabilities.

OpenAI is explicit that this program will keep expanding. Safeguards will rise with capability, and access pathways will develop as the verification infrastructure matures. The goal is not a fixed program but a system that scales trust alongside capability, so that as models become more powerful, the defenders using them stay ahead of the attackers trying to turn those same capabilities against them.

Individual cybersecurity professionals can verify their identity at chatgpt.com/cyber. Organizations interested in enterprise-level access can reach out through their OpenAI representative. Teams working on open source security and critical infrastructure can apply to the grant program through OpenAI’s website.

https://openai.com/index/accelerating-cyber-defense-ecosystem/