The cybersecurity industry has been waiting for this kind of move. OpenAI just unveiled OpenAI Daybreak, a vision and a product line aimed at putting frontier AI capabilities directly into the hands of cyber defenders. Not theoretical AI safety. Not another threat-intel feed. Actual reasoning models, plugged into the defensive workflow, designed to find vulnerabilities, validate patches, and make software resilient from the moment it’s written.

I’ve watched this space for years. Most “AI for security” launches end up being marketing wrappers around basic pattern matching. Daybreak looks structurally different. The pitch is straightforward. Pair OpenAI’s strongest models with the Codex agentic harness, then build verified-access tiers that let defenders use capabilities responsibly. Here’s what I’m seeing in the announcement and why it matters.

What Daybreak actually is

The name evokes sunrise: the first light that lets you see risks earlier and act faster. Marketing aside, Daybreak is OpenAI’s attempt to reframe how software gets defended. The premise is that the next era of cyber defense should be built into software from the start, not bolted on after the fact.

The product combines three things. The intelligence of frontier models like GPT-5.5. The extensibility of Codex acting as an agentic harness inside repositories. A partner network across the security industry that handles deployment, validation, and integration. None of these is new on its own. The combination is what changes the math.

What defenders get is the ability to bring secure code review, threat modeling, patch validation, dependency risk analysis, and remediation guidance directly into the everyday development loop. Software becomes more resilient from line one, not from the post-incident retrospective.

The trusted access model

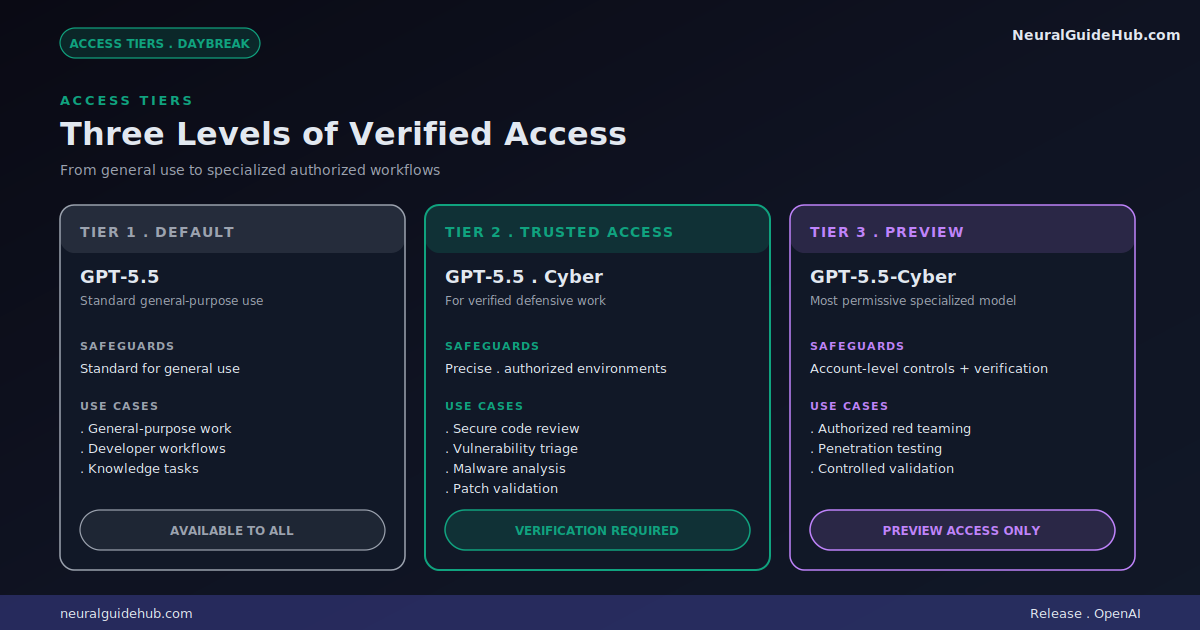

This is the part I find most interesting. OpenAI knows that any AI capable enough to help defenders is also capable enough to help attackers. So Daybreak ships with three access tiers, each with different safeguards.

The default tier is GPT-5.5 with standard safeguards. General-purpose use, developer work, knowledge tasks. Same model behavior most users already see.

The middle tier is GPT-5.5 with Trusted Access for Cyber. More precise safeguards specifically for verified defensive work in authorized environments. This is for the bulk of defensive workflows: secure code review, vulnerability triage, malware analysis, detection engineering, and patch validation.

The top tier is GPT-5.5-Cyber. The most permissive model behavior, paired with stronger verification and account-level controls. Preview access only, intended for authorized red teaming, penetration testing, and controlled validation. The kind of work where you actually need the model to think like an attacker to defend against one.
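One way to picture the three tiers is as a lookup from the kind of work to the most permissive tier an account qualifies for. The sketch below is purely illustrative. The tier labels mirror the announcement, but the task names, the `verified` and `preview_access` flags, and the `pick_tier` function are my own assumptions, not an OpenAI API, and the fallback behavior is a guess at how such gating could work.

```python
from enum import Enum


class Tier(Enum):
    DEFAULT = "GPT-5.5 (standard safeguards)"
    TRUSTED_ACCESS = "GPT-5.5 with Trusted Access for Cyber"
    CYBER_PREVIEW = "GPT-5.5-Cyber (preview)"


# Hypothetical task labels, grouped by the workloads the announcement lists per tier.
DEFENSIVE_TASKS = {
    "secure_code_review",
    "vulnerability_triage",
    "malware_analysis",
    "detection_engineering",
    "patch_validation",
}
ADVERSARIAL_TASKS = {"red_teaming", "penetration_testing", "controlled_validation"}


def pick_tier(task: str, verified: bool = False, preview_access: bool = False) -> Tier:
    """Return the most permissive tier this account qualifies for, given the task.

    Assumption: an account that lacks the required verification falls back
    to the next tier down rather than being refused outright.
    """
    if task in ADVERSARIAL_TASKS and verified and preview_access:
        return Tier.CYBER_PREVIEW
    if task in (DEFENSIVE_TASKS | ADVERSARIAL_TASKS) and verified:
        return Tier.TRUSTED_ACCESS
    return Tier.DEFAULT


if __name__ == "__main__":
    print(pick_tier("patch_validation", verified=True).value)
    print(pick_tier("red_teaming", verified=True, preview_access=True).value)
    print(pick_tier("write_docs").value)
```

The point of the sketch is the shape of the policy: capability is keyed to verification, not handed out by default.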

Where Codex Security fits in

The technical core of Daybreak runs through Codex Security. The way OpenAI describes it, Codex builds an editable threat model directly from your repository. Then it focuses analysis on realistic attack paths and the code that matters most. Not surfacing every theoretical issue. Prioritizing what’s actually exploitable.

Three workflows stand out from the announcement. First, find and fix vulnerabilities through repository-aware reasoning. Second, burn down the existing security backlog instead of accumulating more. Third, automate detection and response across systems. Each one targets a different part of the security lifecycle, but all three feed back into the same loop.

The framing is “the security flywheel.” More vulnerabilities found means better threat models. Better threat models mean faster patches. Faster patches mean defenders catch up to attackers instead of chasing them. AI accelerates each step.

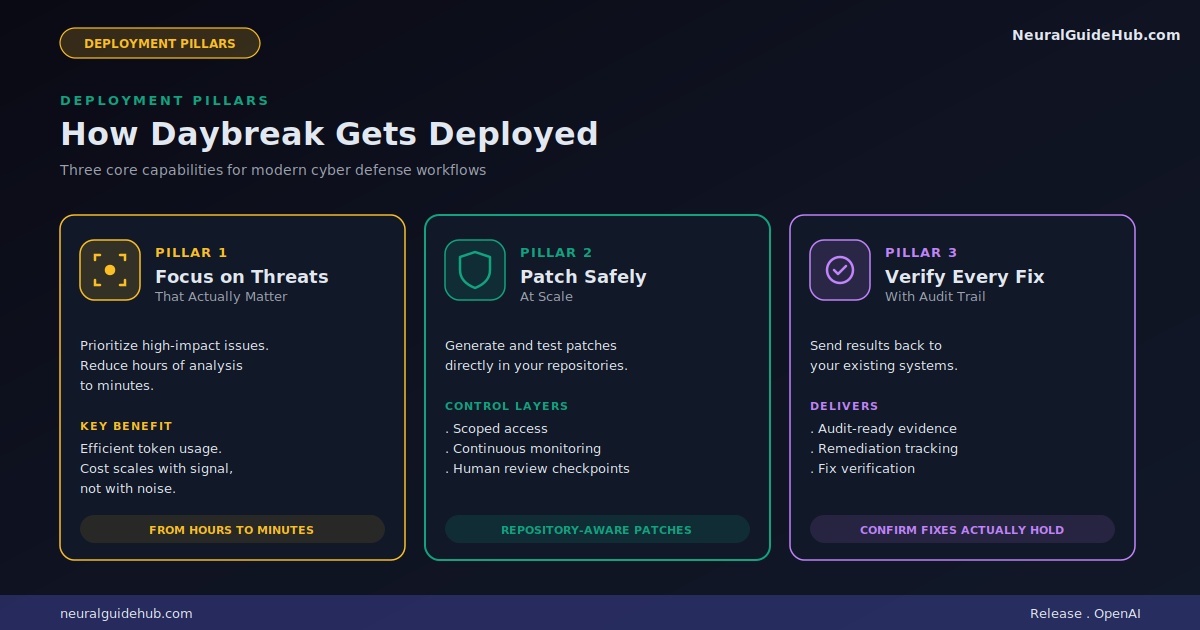

Three pillars of deployment

OpenAI outlines three core capabilities for how Daybreak gets deployed in real defensive work.

The first pillar is to focus on the threats that matter. Prioritize high-impact issues. Cut hours of human analysis down to minutes. Use tokens efficiently so cost scales with what’s worth investigating, not with noise.
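To make the "cost scales with what’s worth investigating" idea concrete, here is a minimal sketch of budget-aware triage. It is not how Daybreak works internally, since the announcement does not say. The `Finding` fields, the impact-times-exploitability score, and the `min_score` cutoff are all assumptions I’m introducing for illustration.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    name: str
    impact: float          # hypothetical 0..1 estimate of damage if exploited
    exploitability: float  # hypothetical 0..1 estimate of how reachable the bug is
    est_tokens: int        # rough guess at the cost of a deep analysis


def select_for_analysis(findings, token_budget, min_score=0.1):
    """Pick findings for deep analysis, highest score first, within a token budget."""
    ranked = sorted(findings, key=lambda f: f.impact * f.exploitability, reverse=True)
    chosen, spent = [], 0
    for f in ranked:
        if f.impact * f.exploitability < min_score:
            break  # everything after this point is treated as noise
        if spent + f.est_tokens <= token_budget:
            chosen.append(f)
            spent += f.est_tokens
    return chosen


if __name__ == "__main__":
    backlog = [
        Finding("sqli", 0.9, 0.8, 20_000),
        Finding("verbose_error", 0.2, 0.3, 5_000),
        Finding("ssrf", 0.7, 0.5, 30_000),
    ]
    for f in select_for_analysis(backlog, token_budget=60_000):
        print(f.name)
```

Low-scoring findings never consume budget, so spend tracks the issues that clear the bar rather than the size of the backlog.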

The second pillar is to patch safely at scale. Generate and test patches directly in your repositories, with scoped access, monitoring, and human review checkpoints. The point is to make remediation tractable on the kind of large codebases where manual patching inevitably falls behind.

The third pillar is to verify every fix. Send results and audit-ready evidence back into the systems your team already uses. Track remediation. Confirm that fixes actually held. This is the part most “AI security” tools skip, and it’s the part defenders care about most.
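What "confirm that fixes actually held" could mean in practice: a fix only counts once a rescan after the patch no longer reports the finding. The sketch below is my own framing, not something from the announcement. `FixRecord`, its fields, and the `held` rule are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class FixRecord:
    finding_id: str
    patched_on: date
    # Each rescan is (scan date, was the finding still present?).
    rescans: list = field(default_factory=list)

    def held(self) -> bool:
        """True only if at least one post-patch rescan exists and none still sees the finding."""
        later = [present for when, present in self.rescans if when >= self.patched_on]
        return bool(later) and not any(later)


if __name__ == "__main__":
    record = FixRecord("FINDING-1", date(2025, 1, 10))
    print(record.held())  # no evidence yet, so not verified
    record.rescans.append((date(2025, 1, 12), False))
    print(record.held())
```

An unscanned patch is treated as unverified rather than fixed, which is the distinction the third pillar is drawing.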

Why this announcement matters

The defensive side of cybersecurity has been losing ground for years. Attackers automated their tooling first. Defenders have been slower to adopt AI, partly because the risk trade-off is harder to manage. Anything that helps a defender automate vulnerability discovery could, in theory, help an attacker do the same thing faster.

What I read in the OpenAI Daybreak announcement is an attempt to solve that tension structurally. The trusted access tiers are the proof. OpenAI isn’t just shipping more capability and hoping it gets used well. They’re tying capability to verification, scoping permissive behavior to authorized environments, and adding account-level controls on the most powerful tier.

What I appreciate about the pitch is its honesty: OpenAI acknowledges that this is iterative. The announcement says they’re working with industry and government partners over the coming weeks to deploy increasingly cyber-capable models. Not a one-shot launch. Not “trust us, the model is safe.” A staged rollout with checkpoints. That’s the right shape for something this consequential.

What I’d be watching for next

A few open questions for anyone in security thinking about whether Daybreak is worth evaluating.

How does pricing work for Trusted Access for Cyber? OpenAI hasn’t published specifics yet. For most defensive teams, the difference between “interesting demo” and “actually deployable” is whether the cost matches the cycle time gains.

How fast does verification happen? The middle tier requires verified defensive work in authorized environments. If verification takes weeks, that limits who can actually use this on real timelines.

What does the partner ecosystem look like? OpenAI talks about partners across the security flywheel without naming many. The integrations that matter most, like SIEM, SOAR, code hosting platforms, and ticketing systems, will determine how friction-free the daily workflow becomes.

Where to go from here

If your team is interested, OpenAI is inviting teams to contact it directly, both to request a vulnerability scan and to talk to sales. The middle tier is the one most defensive teams will want to evaluate first. Standard general use stays on default GPT-5.5. Specialized red teaming work needs to go through the preview access pathway for GPT-5.5-Cyber.

The broader story is that frontier AI is now being explicitly aimed at cyber defense as a product category, not just a research direction. OpenAI Daybreak is the most coherent attempt I’ve seen so far at making that shift official. Whether it actually moves the needle for defenders depends on execution over the next twelve months, but the foundations look right.

For anyone running a security team that’s been watching AI from the sidelines, this is the announcement that probably ends that wait. Worth a serious look.