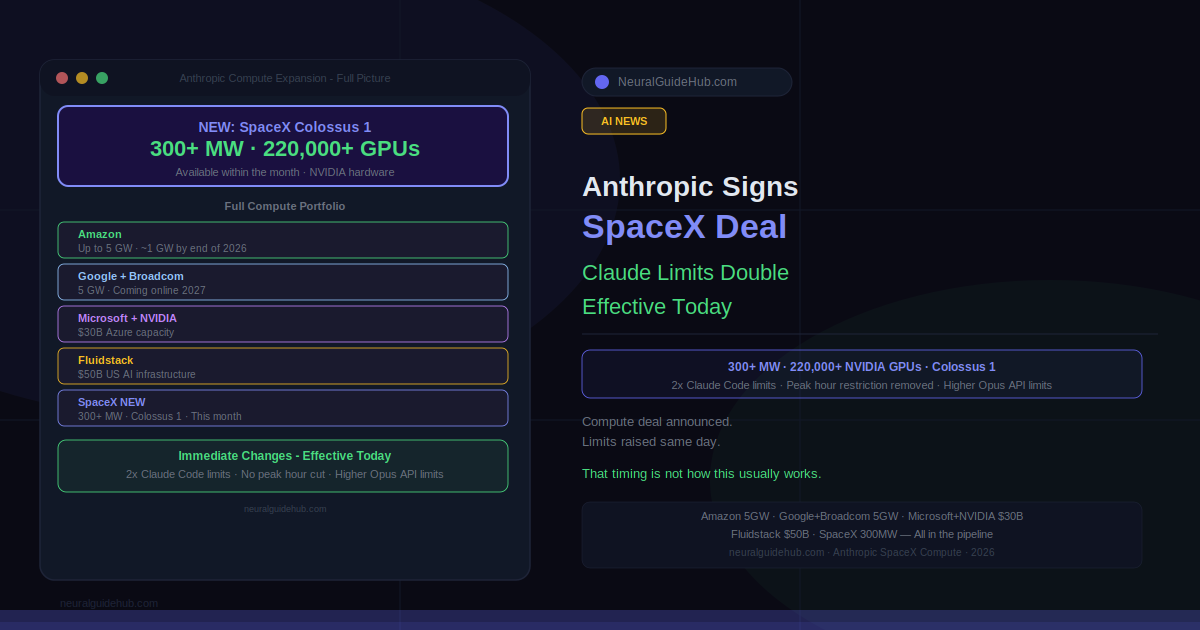

Compute constraints have been the unglamorous backstory behind Claude’s rate limits for a while. That is no secret: Anthropic has been transparent about the tradeoff between capacity and access. The Anthropic-SpaceX compute deal announced today is the most direct response yet: full access to SpaceX’s Colossus 1 data center, with over 300 megawatts of capacity and more than 220,000 NVIDIA GPUs, available within the month. And unlike most infrastructure announcements, which take quarters to show up in user experience, this one comes with changes to Claude’s usage limits that take effect today.

What Changed for Claude Users Right Now

Three things, all live today.

Claude Code’s five-hour rate limits are doubling for Pro, Max, Team, and seat-based Enterprise plans. That’s a meaningful change for anyone who’s hit the ceiling mid-session and had to wait. The peak-hours limit reduction on Claude Code is gone for Pro and Max accounts. And API rate limits for Claude Opus models are going up considerably; the specific numbers are in the table Anthropic published alongside this announcement.

The timing matters. Anthropic isn’t announcing capacity and saying limits will improve eventually. The capacity came online and the limits moved the same day. That’s worth noting because it’s not how these announcements usually work.

The SpaceX Deal in Detail

Anthropic has signed an agreement to use all of the compute capacity at SpaceX’s Colossus 1 data center: over 300 megawatts and more than 220,000 NVIDIA GPUs, coming online within the month. That capacity goes directly toward serving Claude Pro and Max subscribers.
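To put those two figures side by side, here is a rough back-of-the-envelope sketch in Python. It simply divides the quoted facility power by the quoted GPU count. Both inputs are lower bounds from the announcement ("over" and "more than"), and the function name is made up for illustration, so treat the result as an order-of-magnitude, facility-level figure (cooling, networking, and other overhead included) rather than a per-chip spec.

```python
# Figures quoted in the announcement. Both are stated as lower bounds,
# so the ratio below is only a rough, facility-level estimate.
FACILITY_POWER_MW = 300   # "over 300 megawatts"
GPU_COUNT = 220_000       # "more than 220,000 NVIDIA GPUs"


def facility_kw_per_gpu(power_mw: float, gpus: int) -> float:
    """Return the facility power budget per GPU, in kilowatts.

    Hypothetical helper for illustration; it includes everything the
    facility draws, not just the GPU itself.
    """
    return power_mw * 1000 / gpus


kw_per_gpu = facility_kw_per_gpu(FACILITY_POWER_MW, GPU_COUNT)
print(f"Roughly {kw_per_gpu:.2f} kW of facility power per GPU")
```

On the quoted numbers, that works out to a bit under 1.4 kW per GPU across the whole facility.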

The orbital piece is the headline-grabbing part: alongside this agreement, Anthropic has expressed interest in partnering with SpaceX to develop multiple gigawatts of orbital AI compute capacity. That’s further out and more speculative, but it signals where the relationship might go.

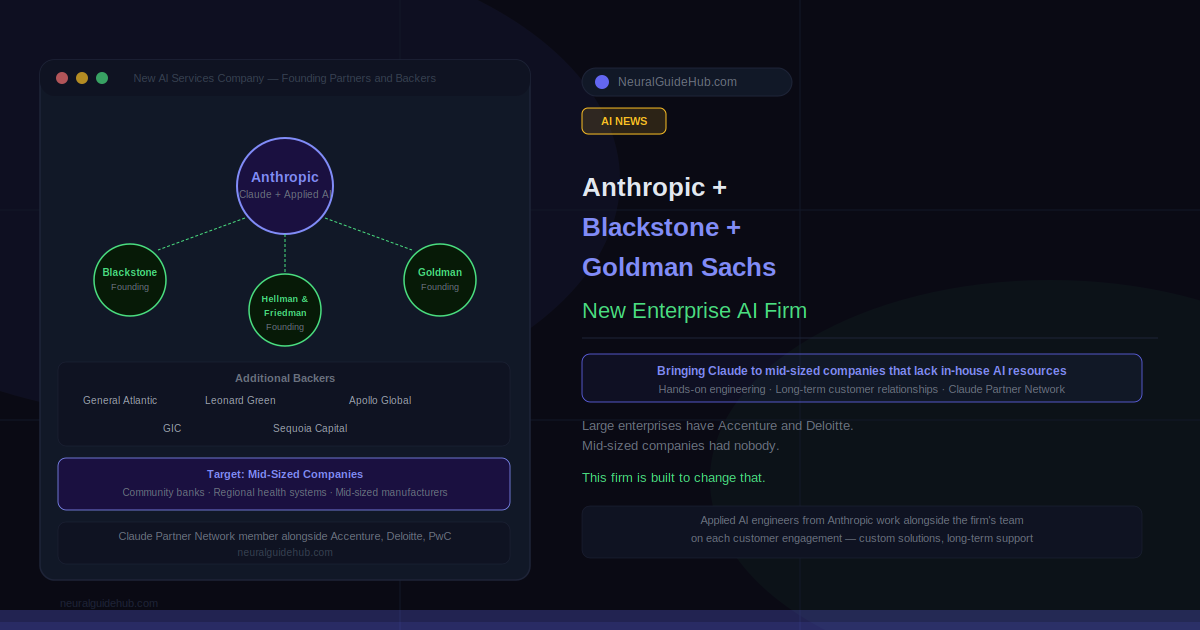

The Anthropic-SpaceX Deal in Context: The Full Compute Picture

This isn’t a standalone deal. Anthropic has been assembling compute capacity aggressively across multiple partners. The full list now includes a deal with Amazon for up to 5 gigawatts, with nearly 1 GW online by the end of 2026; a 5 GW agreement with Google and Broadcom starting in 2027; a strategic partnership with Microsoft and NVIDIA covering $30 billion of Azure capacity; and a $50 billion investment in American AI infrastructure with Fluidstack.

Add SpaceX’s 300+ megawatts and you’re looking at a company that has committed to an extraordinary amount of compute across a short window. The hardware runs across AWS Trainium, Google TPUs, and NVIDIA GPUs. There is no single-vendor dependency, which is either a redundancy strategy or a hedge, depending on how you read it.
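For a sense of scale, the power-denominated commitments can be tallied in a few lines of Python. This is a rough sketch under stated assumptions: it uses only the deals quoted in gigawatts or megawatts, treats "up to 5 gigawatts" and "over 300 megawatts" as point values, and leaves out the Microsoft/NVIDIA and Fluidstack deals because those are quoted in dollars, not power. The dictionary keys are labels chosen here for illustration.

```python
# Power-denominated commitments mentioned in this article, in gigawatts.
# Assumption: ceilings ("up to") and floors ("over") are treated as point
# values, so the total is indicative only.
commitments_gw = {
    "Amazon (up to)": 5.0,
    "Google and Broadcom": 5.0,
    "SpaceX Colossus 1 (over)": 0.3,
}

total_gw = sum(commitments_gw.values())
spacex_share = commitments_gw["SpaceX Colossus 1 (over)"] / total_gw

print(f"Total quoted in power terms: about {total_gw:.1f} GW")
print(f"SpaceX share of that total: about {spacex_share:.1%}")
```

On these numbers, Colossus 1 is roughly 3% of the quoted power total. What sets it apart is timing: it comes online within the month, while most of the larger commitments arrive later.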

International Expansion and Where New Capacity Is Going

Enterprise customers in financial services, healthcare, and government need compute that stays in their region. Data residency requirements, compliance frameworks, and regulatory mandates are real constraints that limit where an enterprise can run AI workloads. Anthropic’s Amazon collaboration already includes additional inference capacity in Asia and Europe to address this.

The geographic selection criteria Anthropic laid out are specific: democratic countries, legal frameworks that support infrastructure investments at this scale, and secure supply chains covering hardware, networking, and facilities. That framing is deliberate. It signals where they won’t expand as much as where they will.

There’s also a community angle. Anthropic committed earlier to covering any consumer electricity price increases caused by their US data centers. They’re exploring extending that commitment internationally and investing back into host communities. Whether that plays out in practice depends on how the expansion unfolds, but the stated intent is notable at a time when data center energy consumption is a legitimate public concern in multiple jurisdictions.

What This Means Practically

For Claude Code users, the immediate change is doubled five-hour rate limits on Pro, Max, Team, and seat-based Enterprise plans, plus the removal of the peak-hours reduction for Pro and Max. For API developers building on Opus models, the raised rate limits remove a ceiling that was constraining production deployments for some teams. For enterprise customers in regulated industries, the international infrastructure expansion addresses a compliance blocker that’s been limiting adoption in certain markets.

The broader picture is a company moving fast on infrastructure while trying to keep user experience in step with capacity. The fact that today’s limit increases came alongside today’s capacity announcement rather than months later suggests they’re managing that coordination better than they were a year ago. The real test will be whether the Colossus 1 capacity actually translates to stable, improved performance at scale over the next few weeks, not just on announcement day.