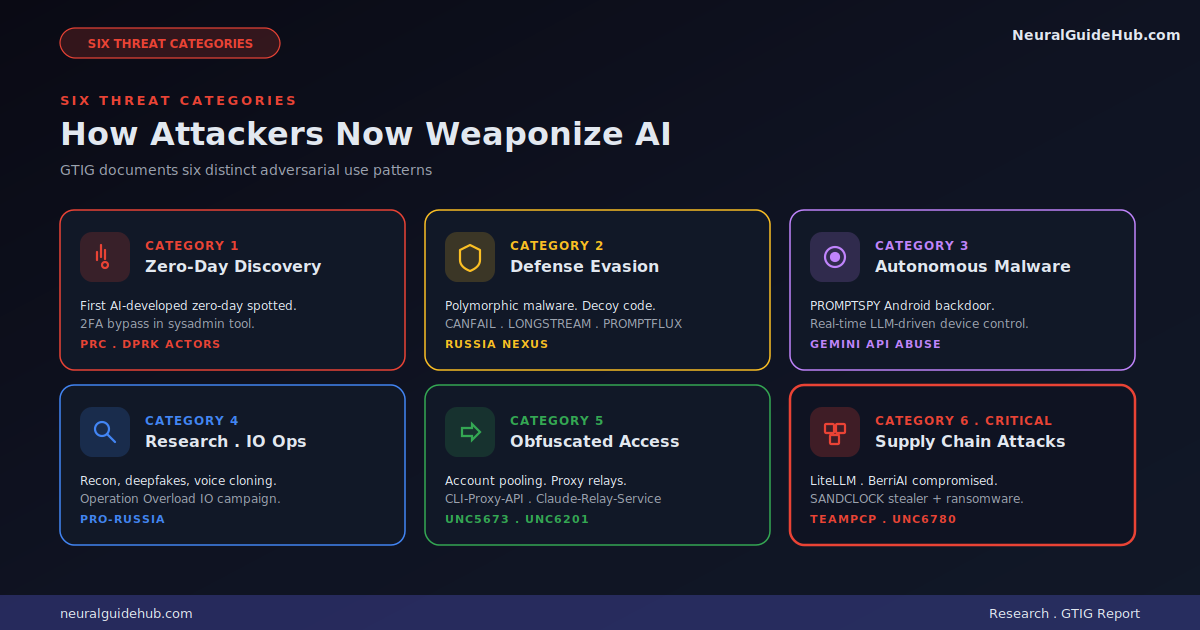

Google’s Threat Intelligence Group just published its latest tracker on how adversaries are using AI in real-world operations. I’ve read through the full report and the shift it describes is significant. The GTIG AI threat report documents the transition from nascent experimentation to industrial-scale application of generative models inside adversarial workflows. This isn’t theoretical anymore. State-sponsored actors and cyber crime groups are now using AI for vulnerability discovery, autonomous malware operations, and supply chain attacks against AI infrastructure itself.

I’ve been tracking this space since the first wave of LLM-assisted phishing in late 2023. The maturity gap between that era and what GTIG describes now is the story. This isn’t ChatGPT writing better phishing emails. This is zero-days developed with AI assistance, autonomous Android malware that interprets system states through the Gemini API, and supply chain attacks targeting AI components like LiteLLM. Here’s what stands out and why defenders should pay attention.

The headline finding: AI-developed zero-day

For the first time, GTIG identified a threat actor using a zero-day exploit that it believes was developed with AI assistance. The vulnerability was a 2FA bypass in a popular open-source system administration tool. The criminal group planned to use it in a mass exploitation event. Google’s proactive counter-discovery may have prevented deployment.

What makes this technically interesting is the type of flaw involved. It wasn’t a memory corruption bug or an input sanitization error. It was a high-level semantic logic flaw where the developer hardcoded a trust assumption. Traditional fuzzers and static analysis tools are tuned to find sinks and crashes. They struggle with semantic logic.

Frontier LLMs are different. They can read developer intent and correlate enforcement logic with hardcoded exceptions. That’s a meaningful capability shift. Dormant logic errors that look functionally correct to scanners but are strategically broken from a security perspective become discoverable.
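To make that class of bug concrete, here is a minimal sketch of a hardcoded trust assumption that bypasses a second factor. The report does not publish the vulnerable code, so every name here (`TRUSTED_CLIENT`, `LoginRequest`, `is_authenticated`) is hypothetical and the logic is only an illustration of the pattern, not the actual flaw.

```python
from dataclasses import dataclass

# Hypothetical constant: a client ID the developer assumed only
# trusted internal tooling would ever present.
TRUSTED_CLIENT = "internal-admin-cli"


@dataclass
class LoginRequest:
    username: str
    password_ok: bool   # result of the password check
    otp_ok: bool        # result of the second-factor check
    client_id: str      # caller-supplied, so attacker-controlled


def is_authenticated(req: LoginRequest) -> bool:
    """Flawed version: enforcement logic plus a hardcoded exception."""
    if not req.password_ok:
        return False
    # Logic flaw: the second factor is skipped whenever the caller
    # claims to be the trusted client. Nothing crashes and no input is
    # unsanitized, so fuzzers and sink-based scanners see nothing odd.
    if req.client_id == TRUSTED_CLIENT:
        return True
    return req.otp_ok


def is_authenticated_fixed(req: LoginRequest) -> bool:
    """Both factors are always required; no identity-based exception."""
    return req.password_ok and req.otp_ok
```

Both functions are syntactically clean and behave correctly for ordinary logins. The difference only shows up when a reader compares what the code is meant to enforce against the exception it carves out, which is the kind of reading the report says frontier models can now do.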

The report also flags the Python script implementing the exploit as carrying LLM fingerprints: heavy educational docstrings, a hallucinated CVSS score, and textbook Pythonic structure with detailed help menus. These are the signature of training data leaking into output.

State actors are scaling vulnerability research

The report calls out particular activity from clusters associated with the People’s Republic of China and the Democratic People’s Republic of Korea. These actors are running sophisticated workflows that go far beyond basic prompting.

One specific example: a Chinese threat actor experimented with a vulnerability repository called “wooyun-legacy” hosted on GitHub. The project is a Claude Code skill plugin that integrates a distilled knowledge base of over 85,000 real-world vulnerability cases collected by the Chinese bug bounty platform WooYun between 2010 and 2016. By priming the model with this dataset, the actor steers code analysis toward the kinds of logic flaws an expert auditor would prioritize.

APT45 is sending thousands of repetitive prompts to recursively analyze CVEs and validate proof-of-concept exploits. That kind of scale would be impractical without AI. Done by hand, the same work would tie up a team of analysts for weeks. With AI, it runs in days.

UNC2814 has been using persona-driven jailbreaking, telling Gemini it’s a “senior security auditor” or “C/C++ binary security expert” investigating TP-Link firmware and OFTP protocol implementations. The persona framing is a soft prompt injection technique. Not always successful, but effective enough that defenders should treat these prompts as a known abuse pattern.

PROMPTSPY changes the malware game

PROMPTSPY is the part of the report that genuinely surprised me. It’s an Android backdoor that uses the Gemini API for autonomous device interaction. Not “calls AI for one task.” Actual real-time decision making about how to manipulate the victim’s device.

The technical architecture is the interesting part. PROMPTSPY contains a module called “GeminiAutomationAgent” with a hardcoded prompt. The module serializes the visible Android UI hierarchy into XML-like format through the Accessibility API. It sends that payload to gemini-2.5-flash-lite via an HTTP POST request in JSON mode. The model returns a structured response with action types and spatial coordinates. The malware parses those into simulated physical gestures: clicks, swipes, screen interactions.

That’s a complete shift from how Android backdoors traditionally work. Older malware relies on hardcoded routines and human operators. PROMPTSPY can independently navigate UIs it has never seen before. It can capture biometric data and replay authentication gestures. It uses an invisible overlay to intercept uninstall attempts.

Malware obfuscation gets smarter

GTIG flags four malware families with LLM-enabled obfuscation: PROMPTFLUX, HONESTCUE, CANFAIL, and LONGSTREAM. Each uses AI differently but the goal is the same. Make detection harder.

PROMPTFLUX uses the Gemini API to generate code variants dynamically. HONESTCUE requests specific VBScript obfuscation techniques on the fly. CANFAIL and LONGSTREAM, which the report links to Russia-nexus actors, take a different approach. They use LLM-generated decoy code that looks legitimate but does nothing.

The CANFAIL analysis is the cleanest example. The malware’s source code contains LLM-style comments explaining what the decoy logic does. The threat actor essentially asked the model to generate inert filler code, and the model dutifully documented its filler. LONGSTREAM has 32 instances of querying the system’s daylight saving status. Repetitive enough to look like routine administrative checks. Inert enough to do nothing useful.
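One way an analyst might surface this kind of padding is to count how often each call appears in recovered source and flag the outliers. The sketch below is a rough heuristic under stated assumptions: the function name `repeated_calls`, the threshold, and the sample call `is_daylight_saving_time()` are all made up for illustration, and the regex is language-agnostic guesswork rather than a real parser.

```python
import re
from collections import Counter

# Matches an identifier (optionally dotted) directly followed by "(".
# This is a crude approximation: it will also count keywords such as
# "if (" in C-like languages, so results need a human look.
CALL_RE = re.compile(r"\b([A-Za-z_][\w.]*)\s*\(")


def repeated_calls(source: str, threshold: int = 10) -> dict[str, int]:
    """Return call names that appear at least `threshold` times."""
    counts = Counter(CALL_RE.findall(source))
    return {name: n for name, n in counts.items() if n >= threshold}


if __name__ == "__main__":
    # Hypothetical sample: 32 inert checks wrapped around one real action.
    sample = "is_daylight_saving_time()\n" * 32 + "connect(host)\n"
    print(repeated_calls(sample))
```

A high count is not proof of decoy code, since logging and assertion helpers repeat legitimately. It is a cheap triage signal that tells a reverse engineer where to look first.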

The supply chain attack vector matters most

The supply chain section of the report is the one I’d flag as most consequential for defenders. A cyber crime group called TeamPCP (also tracked as UNC6780) claimed responsibility for multiple compromises of popular GitHub repositories and GitHub Actions. Their targets included Trivy, Checkmarx, LiteLLM, and BerriAI.

The LiteLLM compromise is the standout. LiteLLM is a widely used AI gateway utility that integrates multiple LLM providers. A successful supply chain attack against it can expose API secrets at every organization that installs a compromised release. Those secrets unlock direct access to internal AI systems. Once inside, attackers can use the org’s own AI tooling for reconnaissance, data exfiltration, or lateral movement.

This is the attack pattern that worries me most going forward. Traditional supply chain attacks gave attackers a foothold. AI supply chain attacks give attackers a force multiplier. The compromised AI infrastructure can be turned around to support the next phase of the operation.

Obfuscated LLM access is now industrial

Threat actors aren’t just abusing AI. They’re abusing AI access itself. The report documents an emerging ecosystem of middleware, proxy relays, and automated registration pipelines designed to bypass usage limits and safety guardrails.

GitHub-hosted scripts now automate the full LLM account registration flow. Account creation. CAPTCHA bypass. SMS verification. Status confirmation. Cancellation. The actor cycles through thousands of accounts to maintain high-volume access while subsidizing operations through trial abuse.

UNC5673 uses tools like Claude-Relay-Service to aggregate accounts across Gemini, Claude, and OpenAI. CLI-Proxy-API provides compatible API interfaces for various models. The whole stack functions as a black-market wrapper around legitimate provider infrastructure.

What defenders should take away

Reading the whole GTIG AI threat report, a few defensive priorities stand out for me.

First, AI components in your stack are now valid attack surface. If you’re running LiteLLM, OpenClaw skills, or any AI orchestration layer, treat those dependencies with the same scrutiny you’d apply to authentication libraries. Pin versions. Audit pull requests. Monitor for anomalous behavior in build environments.
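As a small illustration of the “pin versions” point, the sketch below scans the text of a pip-style requirements file and lists entries that are not pinned to an exact version. The function name `unpinned` and the sample package names are hypothetical, and the check is deliberately simple: it treats anything without `==` as unpinned and ignores option lines such as `--hash`.

```python
def unpinned(requirements_text: str) -> list[str]:
    """Return requirement lines that lack an exact '==' version pin."""
    flagged = []
    for raw in requirements_text.splitlines():
        # Drop trailing comments and surrounding whitespace.
        line = raw.split("#", 1)[0].strip()
        # Skip blanks and pip option lines (-r, -e, --hash, ...).
        if not line or line.startswith("-"):
            continue
        if "==" not in line:
            flagged.append(line)
    return flagged


if __name__ == "__main__":
    # Hypothetical package names and versions, for illustration only.
    sample = """
    example-llm-gateway==1.2.3
    example-scanner>=0.9   # floating range
    example-helper
    """
    for entry in unpinned(sample):
        print("not pinned:", entry)
```

An exact pin alone does not stop a compromised release that you have already pinned to, so in practice this sits alongside hash checking and review of dependency updates rather than replacing them.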

Second, semantic logic flaws are now discoverable at scale. If your security review pipeline relies primarily on static analysis and fuzzing, you’re now behind. The kinds of bugs that need expert human review are exactly the kinds AI can now help adversaries find. Defenders need their own AI-augmented review workflows to compete.

Third, autonomous malware needs new detection approaches. PROMPTSPY-style behavior, where the malware queries an LLM in real time, leaves network signatures that look like legitimate API traffic. Detection has to look at process context, not just network indicators. Why is this Android app making POST requests to a Gemini endpoint?
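A minimal sketch of that process-context check, assuming you already have telemetry that attributes each network flow to an app package (for example from an MDM or mobile EDR product). The `Flow` record, the `suspicious_flows` function, and the package names are hypothetical. The hostnames are the public API endpoints for Gemini, OpenAI, and Anthropic.

```python
from dataclasses import dataclass

# Public LLM API hosts. Extend this set for the providers you care about.
LLM_API_HOSTS = {
    "generativelanguage.googleapis.com",  # Gemini API
    "api.openai.com",
    "api.anthropic.com",
}


@dataclass(frozen=True)
class Flow:
    package: str  # app that opened the connection (assumed available)
    host: str     # destination hostname, e.g. from SNI or DNS logs
    method: str   # HTTP method, if visible to the sensor


def suspicious_flows(flows: list[Flow], allowed_packages: set[str]) -> list[Flow]:
    """Flag LLM API traffic from apps with no known reason to send it."""
    return [
        f for f in flows
        if f.host.lower() in LLM_API_HOSTS
        and f.package not in allowed_packages
    ]


if __name__ == "__main__":
    flows = [
        Flow("com.example.assistant", "generativelanguage.googleapis.com", "POST"),
        Flow("com.example.flashlight", "generativelanguage.googleapis.com", "POST"),
    ]
    for f in suspicious_flows(flows, allowed_packages={"com.example.assistant"}):
        print("review:", f.package, "->", f.host)
```

The network indicator is identical for both apps in the example. Only the allowlist of packages expected to talk to an LLM separates the assistant from the flashlight, which is the point about context over indicators.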

Fourth, the supply chain threat extends to AI. Don’t assume that AI-related dependencies are safer than traditional ones just because the ecosystem is new. The opposite is true. Less maturity means weaker review processes, fewer maintainers, and more opportunity for compromise.

How Google is responding

The report closes with a section on Google’s own defensive work. Big Sleep, the AI agent developed by DeepMind and Project Zero, actively searches for unknown vulnerabilities. CodeMender uses Gemini’s reasoning to automatically fix critical code issues. The Secure AI Framework provides a taxonomy for thinking about AI-specific risks like Insecure Integrated Components and Rogue Actions.

None of this is novel framing. But the combination is what matters. Google is using the same capabilities adversaries are weaponizing, in the opposite direction. The race is real and ongoing.

For anyone running a security team in 2026, this report is a required read. The shift it describes from experimentation to industrial-scale weaponization is the central story of how AI is reshaping the threat landscape. Treat it accordingly.