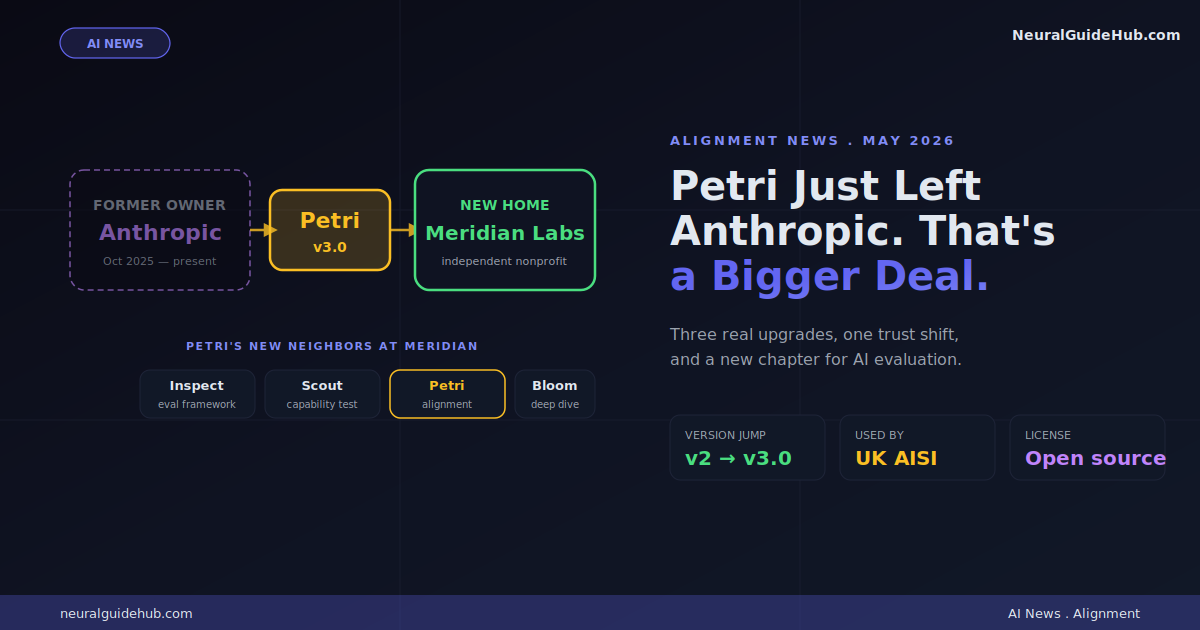

Most AI safety announcements are forgettable. A lab releases a tool. The tool sits in a GitHub repo. Three months later, nobody talks about it. The new Petri 3.0 alignment tool announcement looks different. Anthropic didn’t just update their evaluation toolkit. They handed the whole project over to a nonprofit called Meridian Labs and walked away from owning it. That kind of move is rare in this industry. It’s also smart in a way that most people will miss on first read.

I’ve been following alignment evaluation work for a while. Most labs build their tools, use them internally, publish a paper, and move on. Petri’s path is different. It’s now an industry asset, not an Anthropic asset. Here’s why that distinction actually matters.

Quick Refresher on What Petri Is

If you missed the original Petri release back in October 2025, here’s the short version. Petri is an open-source toolbox built to stress-test large language models for misaligned behavior. The kind of stuff you really don’t want production AI to do.

Specifically, Petri probes for:

- Deception (telling users things the model knows aren’t true)

- Sycophancy (agreeing with users to win approval rather than be accurate)

- Cooperation with harmful requests (helping when it shouldn’t)

- And, in the UK AI Security Institute’s deployment, sabotage of AI research

The clever part is the architecture. Three models work together. An “auditor” simulates scenarios. A “target” responds. A “judge” reads the transcript and scores it for misalignment. Anthropic has run this against every Claude model since Sonnet 4.5. UK AISI uses it on third-party models too.

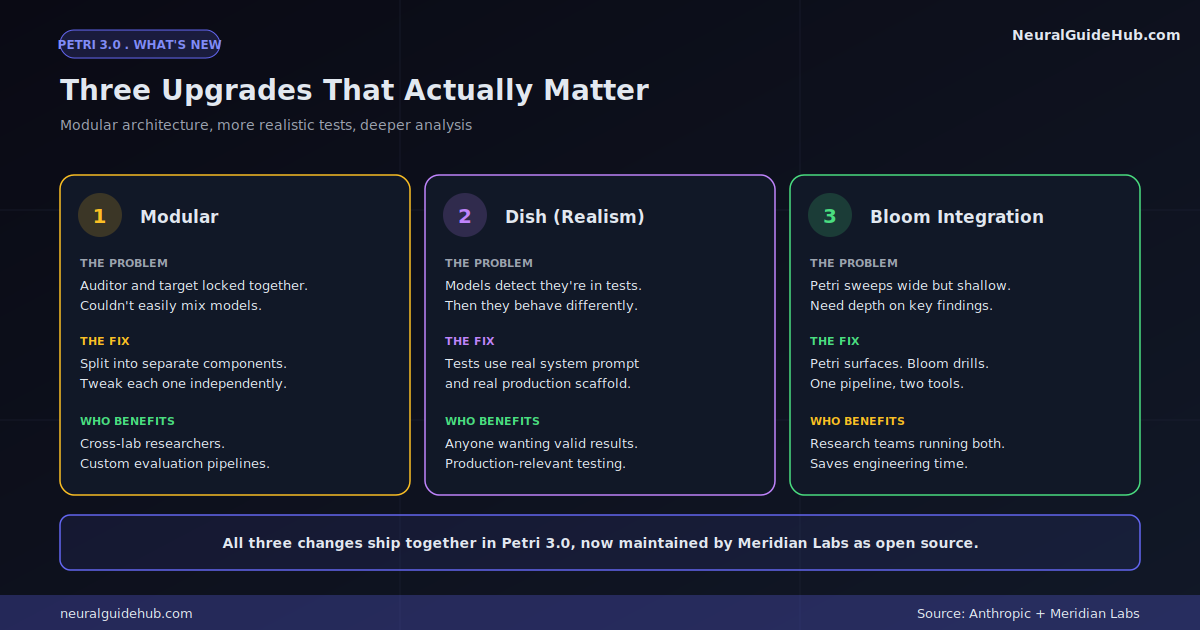

The Three Real Upgrades in Petri 3.0

Version 3 ships with three substantive changes. Not marketing changes. Real ones.

The Auditor and Target Are Now Modular

In the previous version, the auditor model and target model were tightly coupled. You couldn’t easily swap one without rebuilding things. Petri 3.0 splits them into separate components.

Why does this matter? Because researchers can now mix and match. Use Claude as the auditor and test GPT-5.5 as the target. Or vice versa. Or run Claude Opus 4.7 as the judge over a Llama target. The combinations are no longer locked. That’s a much more useful tool for anyone doing cross-model alignment research.

Dish Solves the “Model Knows It’s Being Tested” Problem

This is the upgrade that genuinely impressed me. There’s a known issue with alignment testing: models can often tell when they’re in a test environment. Once they figure that out, they behave differently than they would in production. The test loses validity.

The new add-on is called Dish. It runs Petri tests using the actual system prompt and scaffold the model would have in real deployment. Same wrapper code. Same prompt structure. Same surrounding tools. Much harder for the model to detect that something’s off.

That’s a real research contribution, not a feature bullet. Anyone who’s tried to build clean evaluations knows how hard “indistinguishable from production” actually is.

Bloom Integration Adds Depth

Petri sweeps wide. It tests many behaviors quickly. Bloom, Anthropic’s other open alignment tool, drills deep into specific behaviors. The new integration lets you use Petri to surface concerning patterns, then hand findings to Bloom for thorough analysis.

Two tools. One pipeline. Saves real engineering time for research teams.

The Real Story: Why Anthropic Gave It Away

Here’s the thing nobody is going to lead with. The version 3 upgrades are good. The handover to Meridian Labs is the actual news.

Think about the structural problem with internal alignment evaluation. If Anthropic uses Anthropic’s tool to test Anthropic’s models and reports Anthropic’s findings, the trust ceiling is fundamentally limited. No matter how rigorous the methodology. The conflict of interest is in the org chart.

Move the tool to a neutral nonprofit and that ceiling lifts. Other labs can use it without worrying they’re running someone else’s biased benchmark. Governments can cite results. Academic researchers can build on it without worrying that the maintainer might shift priorities and break their work.

This is exactly the playbook Anthropic used when they donated Model Context Protocol (MCP) to the Linux Foundation. That move turned MCP from “Anthropic’s interesting idea” into “industry standard” in under a year. Petri is on the same trajectory.

What Meridian Labs Looks Like Now

With Petri joining, Meridian’s evaluation stack is starting to look serious. Their existing tools include:

- Inspect: a structured framework for running model evaluations at scale

- Scout: a capability discovery and probing tool

- Petri (new): alignment behavior testing

- Bloom (integrated): deep-dive analysis of specific behaviors

That’s a credible, end-to-end open evaluation stack. Anyone can grab it. No subscription. No vendor lock-in. No “trust our internal numbers” required.

The bigger implication: independent AI evaluation is becoming actual infrastructure. Up to now, “trust but verify” in AI has mostly meant trusting the lab’s PR. The pieces to actually verify are now lining up.

Who Should Care About This

Different audiences get different things from this announcement:

- AI safety researchers. Install Petri 3.0 this week. The modular architecture alone saves you weeks of custom evaluation code if you’re rolling your own.

- Policy folks. A neutral, open evaluation tool that governments are already using (UK AISI) just got a more credible home. That’s relevant for any frameworks you’re building.

- AI lab employees. Watch whether your lab adopts Petri. If yes, expect to see public alignment scores cited in coverage. If no, ask why.

- Enterprise AI buyers. The next 12 months will probably bring vendor RFPs that ask “what’s your Petri score on these behaviors?” Worth tracking.

What I’d Watch Next

A few things to keep an eye on as this plays out:

- Adoption beyond Anthropic. Will OpenAI, Google DeepMind, Meta, or xAI start running Petri 3.0? If yes, that’s the moment this becomes infrastructure. If no, it stays niche.

- The Dish ethics question. Truly indistinguishable test environments raise their own concerns. If a model can’t tell evaluation from production, the consent and transparency questions get murkier.

- Anthropic’s ongoing role. Donating a project is one thing. Continuing to contribute as a community member rather than an owner is another. Their long-term posture matters here.

- Government framework integration. The UK’s AISI is already using Petri. Watch the US AISI, the EU AI Office, and Singapore’s AI Verify Foundation in the next quarter.

The Underlying Shift

Anthropic’s announcement is a structural move dressed up as a version release. The substantive thing isn’t the new features. It’s the acknowledgment, made through action rather than press release language, that some safety infrastructure works better when no single lab owns it.

That’s not nothing. Most frontier labs are happy to talk about safety. Few are willing to give up control of the tools they use to claim safety. Anthropic just did. Whether others follow is the bigger question.

The Petri 3.0 alignment tool sitting at Meridian Labs is a small bet on a larger thesis: that independent evaluation infrastructure can exist in AI the same way it exists in other safety-critical industries. Aviation has the FAA. Pharma has the FDA. AI doesn’t have an equivalent yet, but tools like Petri, sitting in neutral hands, are how that ecosystem starts to form.

If you work in AI in any capacity, the install instructions are on the Petri website. Spend an afternoon with it. Even if you don’t use it daily, understanding what it tests for is worth the time.

And the next time a lab tells you their model is safe, you’ll have a more informed question to ask: what did Petri say?

https://www.anthropic.com/research/donating-open-source-petri