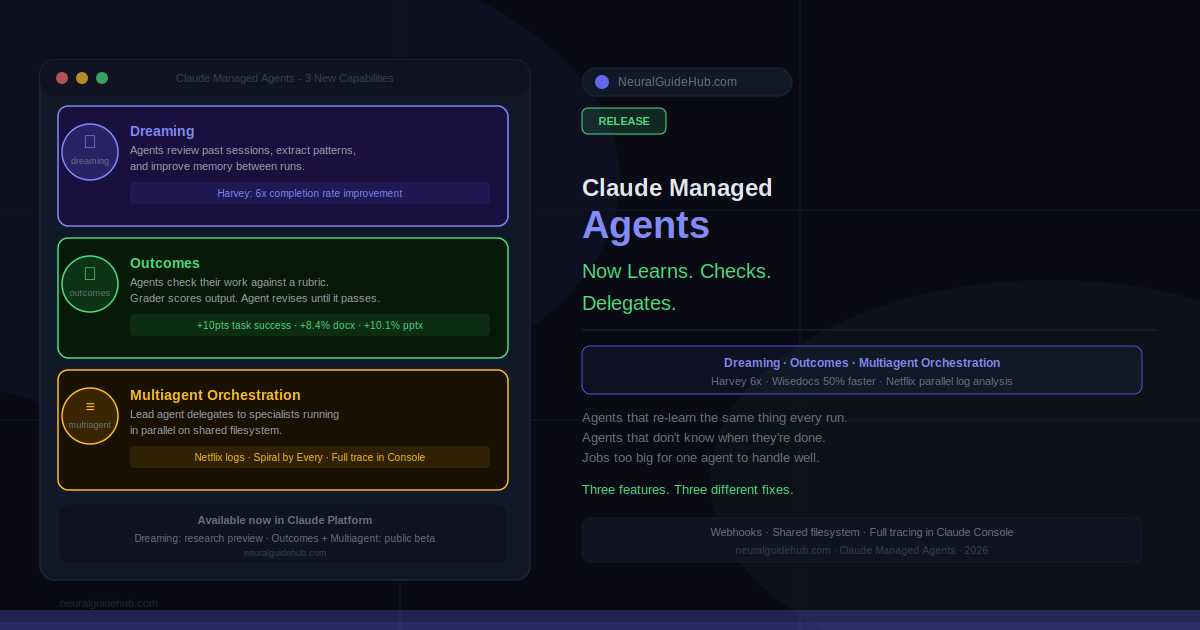

Most agent systems get smarter through prompting. You write better instructions, you get better results. That’s the lever. Claude Managed Agents just shipped three features that add different levers entirely: dreaming, which lets agents improve between sessions without human intervention; outcomes, which gives agents a rubric to check their own work against; and multiagent orchestration, which lets a lead agent delegate pieces of a job to specialists running in parallel. Each one solves a specific failure mode in production agent systems. Worth understanding what each actually does.

Dreaming: Agents That Get Better Between Sessions

Here’s a common problem. An agent figures out a workaround for a tricky tool behavior on Tuesday. Runs again on Thursday. Has no idea that workaround exists. Learns it again. Repeat indefinitely. No accumulation. No improvement over time.

Dreaming fixes that. It’s a scheduled process that reviews past sessions and memory stores, extracts patterns — recurring mistakes, workflows the agent converges on, preferences shared across a team — and curates the memory so it stays high-signal rather than just growing indefinitely. The agent picks up what it learned last week. Actually picks it up.

Harvey is the clearest example in this release. They use Claude Managed Agents for complex legal work — long-form drafting, document creation. With dreaming enabled, their agents retain filetype workarounds and tool-specific patterns between sessions. Completion rates went up roughly 6x in their testing. That number is striking. It suggests the agents were re-learning the same things repeatedly before dreaming existed.

You control how much autonomy dreaming has. It can update memory automatically, or you can review proposed changes before they apply. Research preview for now, request access through the Claude Platform.

Outcomes: Agents That Check Their Own Work

The outcomes feature is conceptually simple. You write a rubric — what success looks like for this task. A separate grader evaluates the agent’s output against that rubric in its own context window, isolated from the agent’s reasoning so it can’t be talked into accepting something that doesn’t meet the bar. When something’s off, the grader tells the agent specifically what needs to change. Agent revises. Grader checks again. Repeat until it passes.

In internal benchmarks, outcomes improved task success by up to 10 points over a standard prompting loop. Largest gains came on the hardest problems. File generation quality also improved: plus 8.4% on docx, plus 10.1% on pptx.

Wisedocs built a document quality check agent using this. Reviews now run 50% faster while staying aligned with their internal standards. The speed improvement comes from removing human review from every iteration — the agent handles the checking loop itself, humans only see final output that already cleared the bar.

Outcomes works for subjective quality too. Copy matching a brand voice. Design following visual guidelines. You write the rubric, the grader applies it. Not just pass/fail criteria — actual judgment calls, as long as you’ve described what good looks like.

Multiagent Orchestration in Claude Managed Agents

Some jobs are too large for one agent to handle well. Not because the agent isn’t capable — because the context window fills up, attention dilutes, and quality drops on the parts that get less focus. Multiagent orchestration is the answer to that.

A lead agent breaks the job into pieces and delegates each to a specialist with its own model, prompt, and tools. Specialists work in parallel on a shared filesystem. The lead agent can check back in mid-workflow because events are persistent and every agent has memory of what it’s done. Full tracing in the Claude Console shows which agent did what, in what order, and why.

Netflix’s platform team built this out for log analysis. Their agent processes logs from hundreds of builds across different sources. Changes that affect thousands of applications. The problem isn’t reading the logs — it’s finding the patterns that recur across many builds rather than flagging every individual anomaly. Multiagent orchestration lets the agent analyze batches in parallel and surface only what’s worth acting on. That’s a different kind of output than a single agent sequentially working through the same data.

Spiral by Every uses multiagent orchestration and outcomes together. Lead agent runs on Haiku — fast, cheap, handles incoming requests and quick follow-up questions. Delegates drafting to subagents on Opus. When a user asks for multiple drafts, subagents run in parallel. Every draft gets scored against a rubric of editorial principles and the user’s voice, pulled from memory. Only drafts clearing the bar get returned. The quality enforcement is automatic and the parallelism means multiple drafts don’t take multiple times as long.

What This Actually Means for Agent Development

These three features address three genuinely different problems. Dreaming handles the accumulation problem — agents that don’t carry learning forward. Outcomes handles the quality problem — agents that don’t know when they’re done. Multiagent orchestration handles the scale problem — jobs that exceed what a single agent can handle coherently.

The combination is more interesting than any one feature individually. An orchestrated system where each specialist agent uses outcomes to verify its own work, and dreaming refines what all of them learn across sessions — that’s a meaningfully different architecture than a single prompted agent with a long system prompt. The Harvey and Wisedocs numbers suggest the gap in real production use is measurable.

Dreaming is in research preview, everything else is public beta. Documentation and the Claude Console are the starting points for developers building with Managed Agents.