The honest challenge with AI and cybersecurity has never been capability. It has been access control. Give a powerful model to the wrong people and you have accelerated the attackers. Restrict it too tightly and you have kneecapped the defenders. OpenAI just published their clearest answer yet to how they plan to navigate that tension.

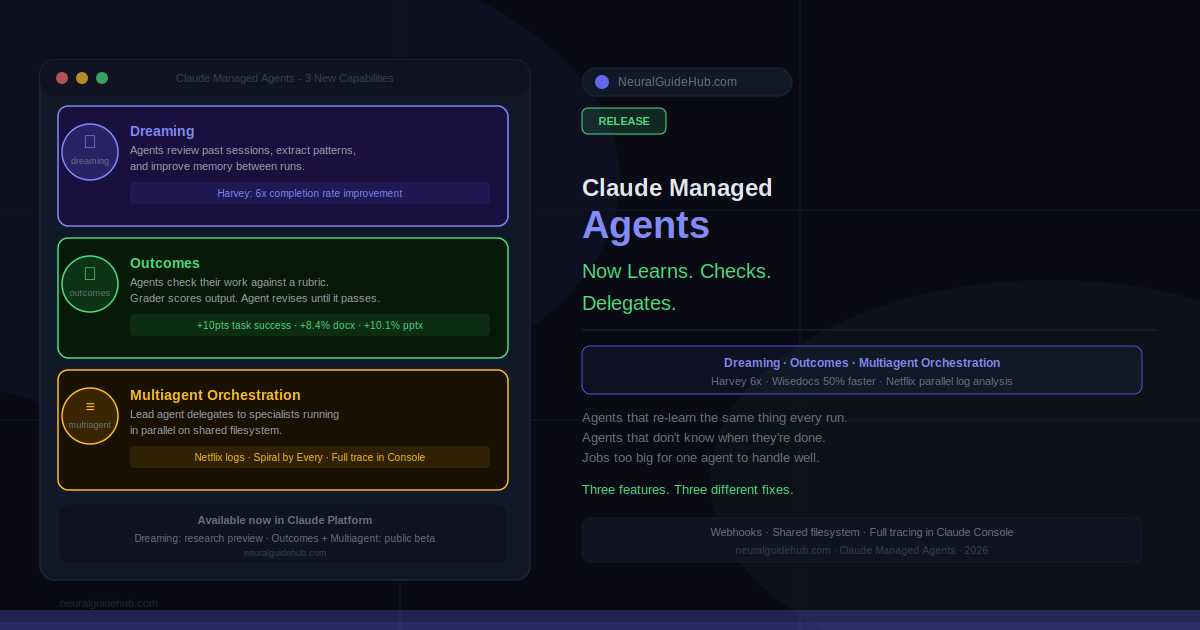

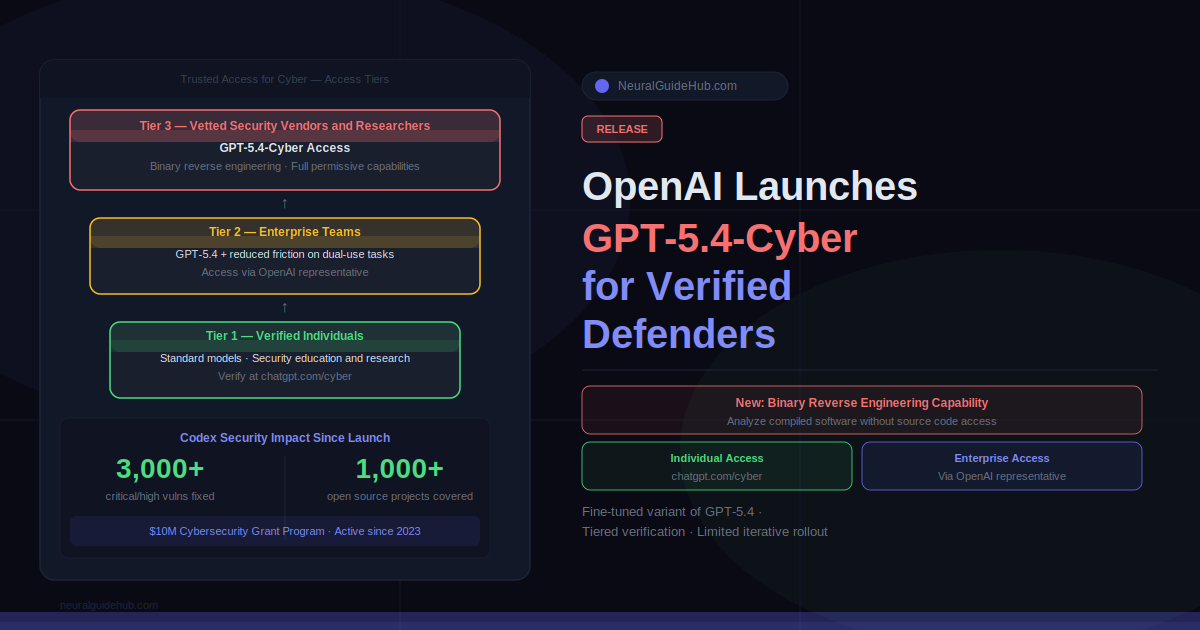

The company is expanding its Trusted Access for Cyber program to thousands of verified individual defenders and hundreds of security teams, and introducing GPT-5.4-Cyber, a variant of GPT-5.4 specifically fine-tuned for defensive cybersecurity work with fewer capability restrictions than the standard model. Binary reverse engineering. Reduced refusal boundaries for legitimate security research. Access graded by verification tier rather than blanket restriction.

This is a meaningful shift in how OpenAI is approaching dual-use AI capabilities, and the details are worth understanding carefully.

What GPT-5.4-Cyber Actually Does Differently

GPT-5.4-Cyber is not simply GPT-5.4 with a different system prompt. It is a fine-tuned variant with adjusted refusal boundaries calibrated specifically for cybersecurity workflows. The most significant new capability is binary reverse engineering, which allows security professionals to analyze compiled software for malware potential, vulnerabilities, and security robustness without needing access to source code.

That last part matters. Source code access is a luxury in real-world security work. Analysts frequently work with compiled binaries, closed-source software, and third-party components where source is unavailable. A model that can reason about binary artifacts meaningfully expands what AI-assisted security analysis can actually do in production environments.

The model also lowers friction on tasks that standard GPT-5.4 handles cautiously, including security education, defensive programming, and responsible vulnerability research. Teams in the highest verification tiers get access to the more permissive version. Lower tiers get reduced safeguard friction on standard models without the full capability expansion.

How the Trusted Access for Cyber Tiers Work

Access to TAC is structured in tiers based on identity verification and organizational accountability. The entry point is straightforward: individual users verify identity at chatgpt.com/cyber. Enterprises request team access through their OpenAI representative.

Everyone approved through the base process gets access to existing models with reduced friction on dual-use security tasks. That covers the majority of legitimate defensive use cases: security training, code review, vulnerability research, defensive tooling development.

GPT-5.4-Cyber access sits at the higher tiers, reserved for vetted security vendors, organizations, and researchers who go through additional authentication steps. OpenAI is starting this with a limited, iterative deployment rather than a broad release, which is consistent with how they handled earlier high-capability model rollouts.
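The tier structure described above can be read as a capability lookup keyed on verification level. The following is only a minimal illustrative sketch of that idea, not OpenAI's implementation or API: the tier names (`UNVERIFIED`, `BASE`, `VETTED`), the capability labels, and the `allowed` helper are all hypothetical names chosen for this example.

```python
from enum import IntEnum


class Tier(IntEnum):
    """Hypothetical verification tiers, loosely following the article."""
    UNVERIFIED = 0  # no identity verification completed
    BASE = 1        # identity verified (individual or enterprise team)
    VETTED = 2      # additional authentication steps completed


# Hypothetical capability labels; these are not real product or API names.
CAPABILITIES = {
    Tier.UNVERIFIED: {"standard_models"},
    Tier.BASE: {"standard_models", "reduced_friction"},
    Tier.VETTED: {"standard_models", "reduced_friction", "cyber_variant"},
}


def allowed(tier: Tier, capability: str) -> bool:
    """Return True if the given tier grants the named capability."""
    return capability in CAPABILITIES[tier]


if __name__ == "__main__":
    for tier in Tier:
        print(tier.name, sorted(CAPABILITIES[tier]))
```

The point of the sketch is that the decision depends on the verified tier of the requester rather than on the model alone, which is the framing the program is built around.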

One access limitation worth noting: the more permissive model comes with restrictions around Zero-Data Retention (ZDR) use cases, which matter most to security teams with strict data handling requirements. Developers and organizations accessing the model through third-party platforms may face additional constraints, because OpenAI has less direct visibility into the user context and request purpose in those environments.

The Cybersecurity Track Record Behind This Release

OpenAI has been building toward this for longer than the announcement implies. Cyber-specific safety training started with GPT-5.2. Additional safeguards came with GPT-5.3-Codex. GPT-5.4 was the first model OpenAI classified as “high” cyber capability under their Preparedness Framework.

In parallel, they launched a $10 million Cybersecurity Grant Program, provided free security scanning to over 1,000 open source projects through Codex for Open Source, and launched Codex Security in private beta six months ago. Since its recent broader launch, Codex Security has contributed to fixes for over 3,000 critical and high severity vulnerabilities across the ecosystem.

That last number is concrete and verifiable. It gives some sense of what automated AI-assisted security tooling operating at scale actually produces in terms of real-world impact.

The Dual-Use Problem OpenAI Is Trying to Solve

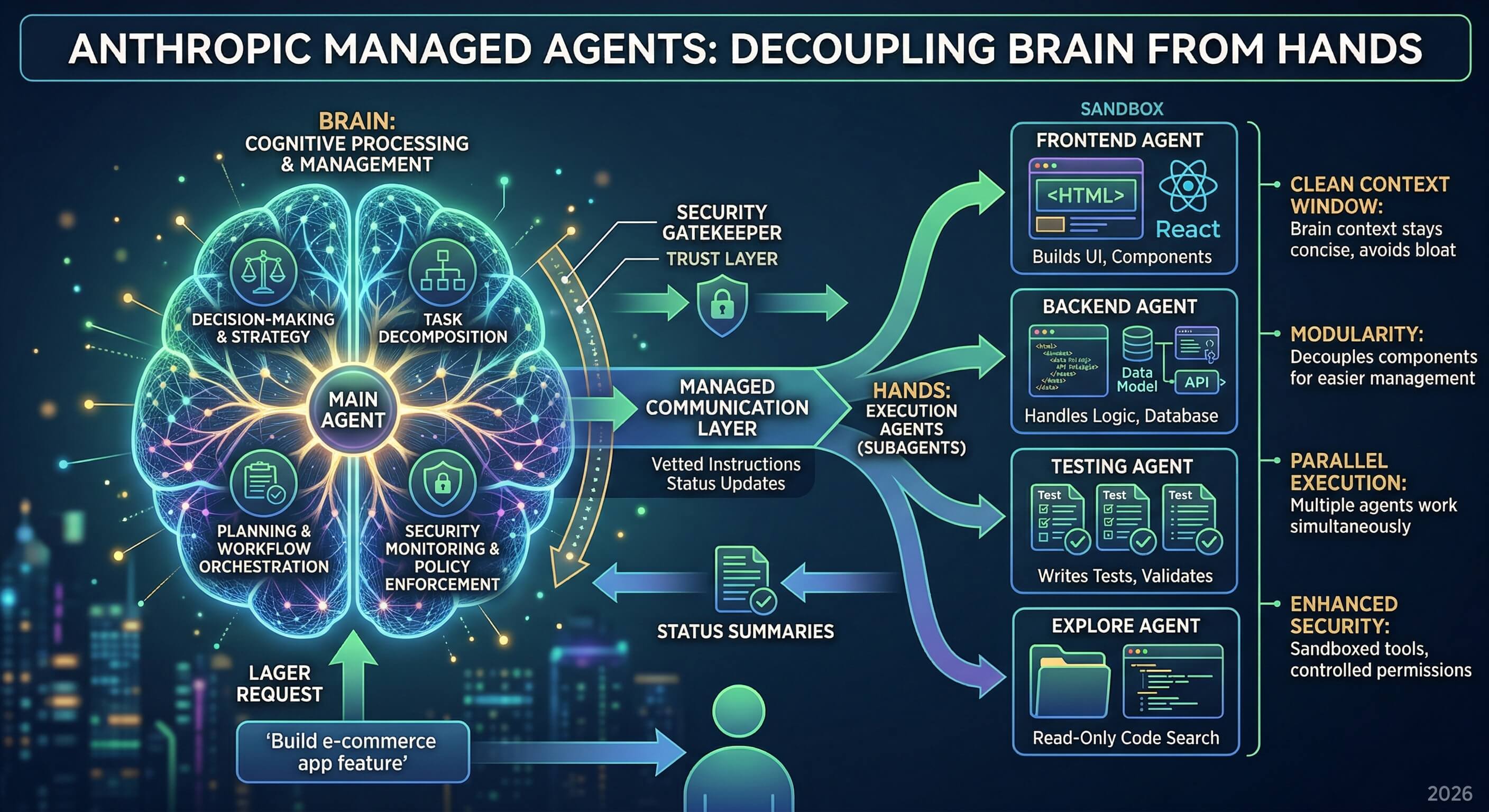

The core tension in any cyber-capable AI model is that the same capability that helps a defender analyze malware also helps an attacker build it. OpenAI’s published answer to this is that risk is not defined by the model alone. It depends on who is using it, what trust signals exist around that user, and what level of access they have been granted.

That framing shifts the access control problem from “what can this model do” to “who has verified access to which capability tier.” It is a more operationally complex approach than blanket restriction, but it is also more honest about how dual-use technology actually works in practice. Security researchers need to probe the same attack surfaces that attackers use. Restricting that capability uniformly would harm defenders more than attackers, who have other means.

OpenAI explicitly acknowledged this: “We don’t think it’s practical or appropriate to centrally decide who gets to defend themselves.” The TAC program is their mechanism for verifying defenders at scale without manual case-by-case decisions.

What This Means for Security Teams in Practice

For enterprise security teams already using OpenAI through commercial agreements, the path to TAC access runs through their OpenAI representative. The process is designed to be low-friction for legitimate security organizations. Teams doing penetration testing, vulnerability research, threat intelligence, or security tooling development are the clear target audience.

For individual security researchers, the identity verification at chatgpt.com/cyber is the entry point. OpenAI uses strong know-your-customer (KYC) and identity verification as the criterion for expanded access, which is a more objective standard than discretionary manual review.

OpenAI also signaled clearly that this is not the final state. More powerful models are coming, and the expectation is that GPT-5.4-Cyber-level capabilities will become insufficient as later models advance. The framework they are building now (tiered access, verified identity, and iterative deployment) is designed to scale with future releases rather than requiring a complete rebuild each time.

For security teams evaluating whether to pursue TAC access, the binary reverse engineering capability alone is likely worth the verification process if the organization does active threat analysis. The reduced friction on dual-use research tasks is useful but incremental; binary analysis is genuinely new ground.

https://openai.com/index/scaling-trusted-access-for-cyber-defense/