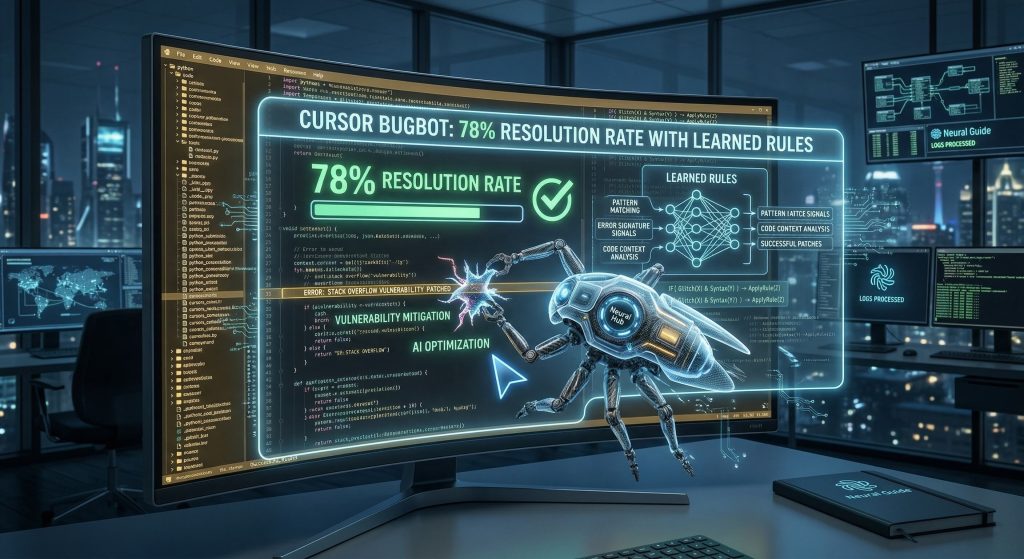

Cursor’s Bugbot launched out of beta in July 2025 with a 52% resolution rate – meaning roughly half the bugs it flagged were actually fixed before the PR merged. The other half were false positives. That number alone put it ahead of most competitors, but it also left a lot of room to improve.

Nine months later, that rate is nearing 80%. More importantly, it is now 15 percentage points ahead of the next-closest AI code review tool on the market. That climb did not come from better prompting or model upgrades alone. It came because Bugbot learned how to teach itself.

The resolution rate gap is significant

To understand why this matters, you need to understand how resolution rate works as a metric. For every comment Bugbot posts on a pull request, the question is whether a developer acts on it before the PR merges. A comment that gets ignored – or actively dismissed – is a false positive. A high resolution rate means the tool is finding real problems, not generating noise.
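The metric is easiest to see as code. The Python sketch below is minimal. The `ReviewComment` record and its `resolved_before_merge` field are hypothetical. In Cursor's methodology, that yes/no judgment comes from an LLM judge, and the sketch simply takes the judgment as given.

```python
from dataclasses import dataclass


@dataclass
class ReviewComment:
    """One bot comment on a PR (hypothetical record shape, for illustration only)."""
    pr_id: int
    resolved_before_merge: bool  # True if a developer acted on it before the PR merged


def resolution_rate(comments: list[ReviewComment]) -> float:
    """Fraction of comments acted on before merge; the rest count as false positives."""
    if not comments:
        raise ValueError("resolution rate is undefined when there are no comments")
    resolved = sum(1 for c in comments if c.resolved_before_merge)
    return resolved / len(comments)


# Example: four comments across three PRs, three of them acted on before merge
sample = [
    ReviewComment(pr_id=1, resolved_before_merge=True),
    ReviewComment(pr_id=1, resolved_before_merge=False),
    ReviewComment(pr_id=2, resolved_before_merge=True),
    ReviewComment(pr_id=3, resolved_before_merge=True),
]
print(f"{resolution_rate(sample):.0%}")  # prints "75%"
```

The unit of measurement is the comment, not the PR. A single PR with several ignored findings pulls the rate down more than a PR with one.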

The numbers across competing tools tell a clear story.


The methodology matters here. Cursor analyzed public repositories only, used an LLM judge to determine whether each comment was addressed before merge, and ran the comparison across tens of thousands of PRs per tool. Bugbot's sample of 50,310 analyzed PRs gives the figure statistical weight.
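The article does not publish confidence intervals, so what follows is only a back-of-the-envelope check. It rests on a simplifying assumption: each of the 50,310 PRs is treated as one independent trial. In reality a PR can carry several comments, and those comments are correlated, which would widen the interval. Under that assumption, a standard normal-approximation (Wald) interval around an illustrative 80% rate is roughly ±0.35 percentage points. That is far smaller than a 15-point gap.

```python
import math


def proportion_ci(p_hat: float, n: int, z: float = 1.96) -> tuple[float, float]:
    """Normal-approximation (Wald) confidence interval for a proportion.

    z=1.96 gives roughly 95% coverage. Assumes n independent trials.
    """
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n)
    return p_hat - half_width, p_hat + half_width


# Illustrative inputs: a rate of 0.80 measured over 50,310 trials,
# treating each analyzed PR as a single independent trial (a simplification).
low, high = proportion_ci(0.80, 50_310)
print(f"95% CI: {low:.4f} to {high:.4f}")
```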

Why offline experiments alone hit a ceiling

Until recently, every improvement to Bugbot came through offline experimentation. The team would tweak the model, test whether the change improved resolution rate on a held-out dataset, and ship it if the numbers moved in the right direction. That process worked – it is how they got from 52% to the low 70s.
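The offline loop described above amounts to a ship/no-ship gate. The sketch below is hypothetical: `OfflineResult`, `should_ship`, and the one-point `min_gain` threshold are illustrative choices, not Cursor's actual criteria.

```python
from dataclasses import dataclass


@dataclass
class OfflineResult:
    """Outcome of running one Bugbot variant over a held-out set (hypothetical shape)."""
    resolved: int  # comments judged as addressed
    total: int     # all comments the variant produced on the held-out set

    @property
    def rate(self) -> float:
        return self.resolved / self.total if self.total else 0.0


def should_ship(baseline: OfflineResult, candidate: OfflineResult,
                min_gain: float = 0.01) -> bool:
    """Ship only if the candidate beats the baseline resolution rate by at least min_gain."""
    return candidate.rate - baseline.rate >= min_gain


baseline = OfflineResult(resolved=710, total=1000)  # 71%
print(should_ship(baseline, OfflineResult(resolved=740, total=1000)))  # True: +3 points
print(should_ship(baseline, OfflineResult(resolved=715, total=1000)))  # False: +0.5 points
```

Resolution rate alone can be raised by posting fewer comments. A realistic gate would therefore plausibly also check that the number of bugs found does not drop.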

But it left a massive signal source completely untapped. Bugbot reviews hundreds of thousands of pull requests every day. Every one of those reviews is a natural experiment: does the developer act on the finding, or not? That behavioral signal is richer and more current than any offline test set, and it was going entirely to waste.

[Figure: Bugs found]

The challenge was building a system to harvest it systematically. Reactions, replies, and reviewer comments all carry different types of signal – and extracting useful rules from that signal without introducing noise required a feedback loop with its own evaluation layer.

How learned rules work

Every merged PR now feeds three types of signals back into Bugbot’s learning system.

Reactions to Bugbot comments are the clearest signal. A downvote tells the system directly that the finding was not useful. Replies to Bugbot comments carry richer information – when a developer explains what was wrong with a suggestion or how it could have been better, that explanation gets processed into candidate rules. Comments from human reviewers flag issues that Bugbot missed entirely, which tells the system what to look for in the future.
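The three feedback channels can be pictured as a classifier over PR events. Everything in this sketch is hypothetical: the `PREvent` shape, the event kinds, and the routing rules are assumptions for illustration. The article does not describe Bugbot's internal data model.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class SignalKind(Enum):
    REACTION = "reaction"          # e.g. a downvote on a Bugbot comment
    REPLY = "reply"                # a developer reply explaining what was wrong
    HUMAN_REVIEW = "human_review"  # a human reviewer flagging something Bugbot missed


@dataclass
class PREvent:
    kind: str           # "reaction" or "comment" (hypothetical event types)
    actor_is_bot: bool  # True when Bugbot itself produced the event
    targets_bot: bool   # True when the event responds to a Bugbot comment
    content: str        # reaction code such as "-1", or comment text


def classify(event: PREvent) -> Optional[SignalKind]:
    """Route a PR event to one of the three learning signals, or None if irrelevant."""
    if event.actor_is_bot:
        return None  # Bugbot's own output is not feedback
    if event.kind == "reaction" and event.targets_bot:
        return SignalKind.REACTION
    if event.kind == "comment" and event.targets_bot:
        return SignalKind.REPLY
    if event.kind == "comment":
        return SignalKind.HUMAN_REVIEW
    return None  # e.g. a reaction on a human's comment


print(classify(PREvent("reaction", False, True, "-1")))  # SignalKind.REACTION
```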

[Figure: Bugbot learning process]

Bugbot processes these signals into candidate rules that it continues to evaluate against incoming PRs. Candidate rules do not immediately become active – they have to accumulate enough consistent signal before being promoted. That evaluation layer is what keeps noise from compounding into bad rules.
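Promotion can be sketched as a threshold on accumulated evidence. The `CandidateRule` class and both thresholds (`min_observations`, `min_agreement`) are hypothetical. The article says only that candidates need "enough consistent signal" before promotion, not how much.

```python
from dataclasses import dataclass


@dataclass
class CandidateRule:
    """A proposed rule awaiting enough evidence to become active (hypothetical)."""
    text: str
    supporting: int = 0     # later PRs where the rule's guidance held up
    contradicting: int = 0  # later PRs where it did not

    def record(self, supported: bool) -> None:
        if supported:
            self.supporting += 1
        else:
            self.contradicting += 1

    def ready_to_promote(self, min_observations: int = 5,
                         min_agreement: float = 0.8) -> bool:
        total = self.supporting + self.contradicting
        if total < min_observations:
            return False  # too little evidence yet, however consistent
        return self.supporting / total >= min_agreement


rule = CandidateRule("Do not flag unused imports in generated/ directories")
for _ in range(4):
    rule.record(True)
print(rule.ready_to_promote())  # False: only 4 observations
rule.record(True)
print(rule.ready_to_promote())  # True: 5 of 5 agree
```

Requiring both a minimum count and a minimum agreement ratio is what stops a single noisy reaction from turning into a rule.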

Active rules influence future reviews as additional instructions. They help Bugbot focus on issues specific to a codebase, understand business context that would not be obvious from the code alone, and avoid patterns it has learned tend to generate false positives in a particular repo. If an active rule starts generating consistent negative signal, it gets disabled. You can also edit or delete rules directly from the Cursor dashboard.
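Below is a sketch of the two behaviors described above: active rules appended to the review instructions, and rules disabled after consistent negative signal. The `ActiveRule` class, `build_review_instructions`, the prompt wording, and the sliding-window thresholds are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ActiveRule:
    """A promoted rule plus a sliding window of recent outcomes (hypothetical)."""
    text: str
    window: int = 10                 # how many recent outcomes to consider
    max_negative_share: float = 0.6  # disable at or above this share of negatives
    recent: list[bool] = field(default_factory=list)  # True = positive signal
    enabled: bool = True

    def record(self, positive: bool) -> None:
        self.recent = (self.recent + [positive])[-self.window:]
        negatives = self.recent.count(False)
        # Only judge once the window is full, so early noise cannot disable a rule.
        if len(self.recent) == self.window and negatives / self.window >= self.max_negative_share:
            self.enabled = False


def build_review_instructions(base_prompt: str, rules: list[ActiveRule]) -> str:
    """Append enabled rules to the reviewer's instructions as extra guidance."""
    lines = [f"- {r.text}" for r in rules if r.enabled]
    if not lines:
        return base_prompt
    return base_prompt + "\n\nRepository-specific rules:\n" + "\n".join(lines)


keep = ActiveRule("Flag raw SQL string concatenation in the billing service")
drop = ActiveRule("Warn on missing docstrings in test helpers")
for outcome in [True] * 3 + [False] * 7:
    drop.record(outcome)
print(build_review_instructions("Review this diff.", [keep, drop]))
```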

The scale of adoption

Since Cursor launched learned rules in beta, more than 110,000 repositories have enabled learning, generating more than 44,000 learned rules across the ecosystem. That is a significant amount of institutional knowledge being encoded at the repo level – knowledge that carries forward into every future review.

The effect compounds. Repos that have run with learned rules longer accumulate more precise rules, which improves resolution rate, which in turn generates more reliable signal for future rule creation. The gap between early and late adopters is likely to widen over time.

What this means for AI code review

The conventional approach to improving AI tools is model-centric: better base model, better prompts, better fine-tuning. Bugbot’s self-improvement mechanism is different. It treats the live production environment as a continuous training signal, and it encodes what it learns into rules that persist across sessions.

This is closer to how experienced developers actually improve over time: not through periodic retraining, but through accumulated judgment about what matters in a specific codebase. A resolution rate near 80% is the current high-water mark for that approach. It is probably not the final one.

Learned rules are enabled and managed in the Cursor Dashboard, where you can also run a backfill across recent PRs to jumpstart the learning process on repos with existing history.