There is a fundamental tension in building agent harnesses: the assumptions you encode today become the liabilities you debug tomorrow. Anthropic’s engineering team just published a detailed breakdown of how they rethought the architecture of Managed Agents – their hosted service for long-horizon agent work – and the core insight is one that anyone building on top of language models should sit with for a while.

Harnesses go stale. The scaffolding you build around a model reflects what that model cannot do on its own. As the model improves, those workarounds become dead weight – or worse, they actively constrain what the system can do.

The Context Anxiety Example

The clearest illustration of this problem comes early in the post. The team discovered that Claude Sonnet 4.5 would wrap up tasks prematurely as it sensed its context limit approaching – a behavior they called “context anxiety.” Their fix was to add context resets to the harness. Reasonable response to a real problem.

Then they ran the same harness on Claude Opus 4.5. The behavior was gone. The resets were now dead weight baked into production infrastructure. The workaround had outlived the problem it was solving.

This is not a corner case. It is the normal trajectory of any system built around a rapidly improving model. The question is whether your architecture makes it easy or painful to remove those assumptions when they expire.
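One way to keep such assumptions cheap to remove is to register each workaround next to the condition that justifies it, so the harness for a given model includes only the shims that model still needs. The sketch below assumes a hypothetical `ModelProfile` recording observed behavior and a hypothetical `Shim` registry. None of these names come from Managed Agents.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class ModelProfile:
    """What we have observed about one model's behavior (hypothetical fields)."""
    name: str
    wraps_up_early_near_context_limit: bool  # the "context anxiety" behavior


@dataclass(frozen=True)
class Shim:
    """A harness workaround plus the condition under which it is still needed."""
    name: str
    needed_for: Callable[[ModelProfile], bool]


# Every workaround is registered together with the assumption it encodes.
SHIMS = [
    Shim("context_reset", lambda p: p.wraps_up_early_near_context_limit),
]


def enabled_shims(profile: ModelProfile) -> List[str]:
    """Names of the workarounds this model still needs."""
    return [s.name for s in SHIMS if s.needed_for(profile)]


older = ModelProfile("older-model", wraps_up_early_near_context_limit=True)
newer = ModelProfile("newer-model", wraps_up_early_near_context_limit=False)
print(enabled_shims(older))  # ['context_reset']
print(enabled_shims(newer))  # []
```

When a new model no longer shows the behavior, the reset drops out without anyone having to hunt for it in production code.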

The Pets vs. Cattle Problem

The first generation of Managed Agents put everything in a single container: session, harness, and sandbox all sharing the same environment. File edits were direct syscalls. No service boundaries to design. Clean and simple.

The problem was infrastructure: the team had adopted a pet. In the pets-vs-cattle framing, a pet is a named, hand-tended server you cannot afford to lose. Cattle are interchangeable. When a single container holds the session, the harness, and the sandbox, that container becomes a pet by definition. A failure anywhere means the entire session is lost.

Debugging was particularly painful. The only window into a stuck session was the WebSocket event stream, which could not distinguish among a harness bug, a packet drop, and the container going offline: all three looked identical from the outside. The remaining option was to open a shell inside the container, but because that same container held user data, doing so was not really acceptable. In effect, the team could not debug their own system.

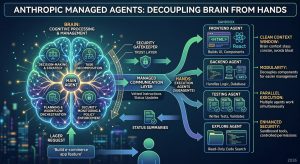

Decoupling Brain from Hands

The solution was to separate what they called the “brain” – Claude and its harness – from the “hands” (sandboxes and tools) and the “session” (the event log). Each became an independent interface with minimal assumptions about the others. Each could fail or be replaced without taking down the rest.
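The three pieces can be pictured as narrow interfaces that know almost nothing about one another. The sketch below uses Python `Protocol` classes. The method names (`append`, `read`, `execute`, `step`) and the in-memory session are illustrative assumptions, not the actual Managed Agents API.

```python
from dataclasses import dataclass
from typing import Dict, List, Protocol


@dataclass(frozen=True)
class Event:
    kind: str     # e.g. "user_message", "tool_call", "tool_result"
    payload: str


class Session(Protocol):
    """The durable, append-only event log."""
    def append(self, session_id: str, event: Event) -> int: ...
    def read(self, session_id: str) -> List[Event]: ...


class Hands(Protocol):
    """A sandbox or tool: a name and input go in, a string comes back."""
    def execute(self, name: str, tool_input: str) -> str: ...


class Brain(Protocol):
    """Claude plus its harness; keeps no durable state of its own."""
    def step(self, session_id: str) -> None: ...


class InMemorySession:
    """One possible Session implementation; a real one would be durable storage."""
    def __init__(self) -> None:
        self._logs: Dict[str, List[Event]] = {}

    def append(self, session_id: str, event: Event) -> int:
        log = self._logs.setdefault(session_id, [])
        log.append(event)
        return len(log) - 1  # position of the new event

    def read(self, session_id: str) -> List[Event]:
        return list(self._logs.get(session_id, []))
```

Because `Protocol` is structural, any class with matching methods satisfies the interface, so implementations can be swapped without the other two pieces noticing.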

The harness no longer lives inside the container. It calls the sandbox the same way it calls any other tool: a name and input go in, a string comes back. The container became cattle. If it dies, the harness catches the failure as a tool-call error and passes it to Claude. If Claude retries, a new container can be initialized from a standard recipe. No nursing required.
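A minimal sketch of "the container became cattle", assuming a hypothetical `SandboxTool` wrapper: a dead container surfaces as an ordinary tool result rather than an exception that kills the harness, and the next call provisions a fresh container from the same recipe. The classes and the `tool_error:` string format are invented for illustration.

```python
from typing import Optional


class ContainerDied(Exception):
    """Raised when the container goes away in the middle of a call."""


class Container:
    def __init__(self, container_id: int, recipe: str) -> None:
        self.container_id = container_id
        self.recipe = recipe
        self.alive = True

    def run(self, command: str) -> str:
        if not self.alive:
            raise ContainerDied(f"container {self.container_id} is gone")
        return f"[container {self.container_id}] ran: {command}"


class SandboxTool:
    """Exposes a disposable container as an ordinary string-in, string-out tool."""
    def __init__(self, recipe: str) -> None:
        self.recipe = recipe
        self.container: Optional[Container] = None
        self.provisioned = 0

    def __call__(self, tool_input: str) -> str:
        if self.container is None:
            # Cattle, not a pet: build a fresh container from the same recipe.
            self.provisioned += 1
            self.container = Container(self.provisioned, self.recipe)
        try:
            return self.container.run(tool_input)
        except ContainerDied as exc:
            # Drop the dead container; the error goes back to Claude as a tool result.
            self.container = None
            return f"tool_error: {exc}"


tool = SandboxTool(recipe="base image + repo checkout")
print(tool("ls"))              # [container 1] ran: ls
tool.container.alive = False   # simulate the container crashing
print(tool("ls"))              # tool_error: container 1 is gone
print(tool("ls"))              # [container 2] ran: ls
```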

Harness recovery works the same way. Because the session log lives outside the harness, nothing in the harness needs to survive a crash. When a harness instance fails, a new one boots, calls wake(sessionId), fetches the event log with getSession(id), and resumes from the last event. The harness is stateless, so failure becomes a recoverable condition rather than a catastrophic one.
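The recovery path can be sketched as follows. `get_session` and `Harness.wake` are Python stand-ins for the article's getSession(id) and wake(sessionId). Their bodies, the module-level store, and `emit` are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Event:
    kind: str
    payload: str


# Durable store outside every harness process (in-memory stand-in here).
SESSION_STORE: Dict[str, List[Event]] = {}


def get_session(session_id: str) -> List[Event]:
    """Stand-in for getSession(id): return the full event log."""
    return list(SESSION_STORE.get(session_id, []))


class Harness:
    """Keeps nothing that must survive a crash; all state comes from the log."""
    def __init__(self) -> None:
        self.session_id: Optional[str] = None
        self.position = 0  # index where the next event will land

    def wake(self, session_id: str) -> List[Event]:
        """Stand-in for wake(sessionId): reload the log and resume after its last event."""
        self.session_id = session_id
        history = get_session(session_id)
        self.position = len(history)
        return history

    def emit(self, kind: str, payload: str) -> None:
        """Record an event in the durable log."""
        if self.session_id is None:
            raise RuntimeError("call wake() first")
        SESSION_STORE.setdefault(self.session_id, []).append(Event(kind, payload))
        self.position += 1


first = Harness()
first.wake("session-42")
first.emit("user_message", "fix the failing test")
first.emit("tool_result", "1 test failed")
del first  # simulate the harness process crashing

replacement = Harness()
history = replacement.wake("session-42")
print(history[-1].payload, replacement.position)  # 1 test failed 2
```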

The Security Boundary

The coupled design had a security problem that is worth understanding clearly. When the harness and the sandbox shared a container, credentials lived next to Claude’s generated code. A prompt injection only had to convince Claude to read its own environment. Once an attacker has those tokens, they can spawn unrestricted sessions and delegate work to them.

The structural fix was to ensure credentials are never reachable from the sandbox where Claude’s generated code runs. For Git, access tokens are used during sandbox initialization to clone the repo and wire it into the local git remote, so push and pull work without the agent ever handling the token. For custom tools, OAuth tokens live in a secure vault outside the sandbox. Claude calls tools through a dedicated proxy that fetches credentials from the vault. The harness never sees any of it.
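The data flow for custom tools can be sketched with a hypothetical `Vault` and `ToolProxy`. In a real deployment the proxy is a separate service across a process or network boundary. Python objects here only illustrate who reads the token and what comes back, since in-process privacy is not a security boundary. The issue-tracker backend and the token value are invented.

```python
from typing import Callable, Dict

# A backend receives the credential and the tool input; it returns only a string.
Backend = Callable[[str, str], str]


class Vault:
    """Secret store that lives outside the sandbox (in-memory stand-in here)."""
    def __init__(self, secrets: Dict[str, str]) -> None:
        self._secrets = dict(secrets)

    def token_for(self, tool: str) -> str:
        return self._secrets[tool]


class ToolProxy:
    """Runs outside the sandbox and is the only component that reads the vault."""
    def __init__(self, vault: Vault, backends: Dict[str, Backend]) -> None:
        self._vault = vault
        self._backends = backends

    def call(self, tool: str, tool_input: str) -> str:
        token = self._vault.token_for(tool)  # fetched per call, never returned
        return self._backends[tool](token, tool_input)


def issue_tracker(token: str, query: str) -> str:
    # Hypothetical upstream service that checks the credential itself.
    if token != "secret-123":
        return "error: unauthorized"
    return f"3 open issues matching {query!r}"


proxy = ToolProxy(Vault({"issues": "secret-123"}), {"issues": issue_tracker})


def sandboxed_agent_code(call_tool: Callable[[str, str], str]) -> str:
    # Code generated inside the sandbox only ever sees this narrow callable.
    return call_tool("issues", "login bug")


print(sandboxed_agent_code(proxy.call))  # 3 open issues matching 'login bug'
```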

The Session Is Not Claude’s Context Window

One of the more technically interesting sections deals with long-horizon context management. Long tasks exceed Claude’s context window, and the standard approaches – compaction, trimming, summaries – all involve irreversible decisions about what to keep. If you discard the wrong tokens, you cannot get them back.

In Managed Agents, the session log serves as a context object that lives outside the context window. The getEvents() interface lets the harness interrogate context by selecting positional slices of the event stream – picking up from the last read position, rewinding before a specific moment, or rereading context before an action. Any fetched events can be transformed in the harness before being passed to Claude.
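The split between a durable log and harness-side context strategies might look like this. `get_events(start, end)` mirrors the article's getEvents() as a positional slice. The parameter names and the helper functions `since`, `before`, and `render` are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Event:
    index: int
    kind: str
    payload: str


class SessionLog:
    """Durable log outside the context window; nothing is ever dropped from it."""
    def __init__(self) -> None:
        self._events: List[Event] = []

    def append(self, kind: str, payload: str) -> int:
        self._events.append(Event(len(self._events), kind, payload))
        return len(self._events) - 1

    def get_events(self, start: int = 0, end: Optional[int] = None) -> List[Event]:
        """Positional slice [start, end) of the event stream."""
        return self._events[start:end]


# Harness-side context strategies: these can change without touching SessionLog.
def since(log: SessionLog, last_read: int) -> List[Event]:
    """Pick up from the last read position."""
    return log.get_events(start=last_read)


def before(log: SessionLog, index: int, window: int) -> List[Event]:
    """Rewind: the `window` events that precede event `index`."""
    return log.get_events(start=max(0, index - window), end=index)


def render(events: List[Event]) -> str:
    """Transform fetched events before they are passed to the model."""
    return "\n".join(f"{e.kind}: {e.payload}" for e in events)


log = SessionLog()
for step in ["read spec", "edit parser", "run tests", "tests failed", "revert edit"]:
    log.append("step", step)

print(render(since(log, 3)))      # events 3 and 4
print(render(before(log, 3, 2)))  # events 1 and 2, leading up to the failure
```

Even if the model's working context was compacted, the events before the failure can still be reread, because compaction happens in the harness and never deletes anything from the log.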

The separation of durable storage (session) from context management (harness) is deliberate. The team cannot predict what specific context engineering future models will require. Keeping that logic in the harness means it can change freely without touching the session interface.

Many Brains, Many Hands

Decoupling had two significant performance and scalability payoffs.

On the brain side: when the harness lived inside the container, inference could not start until the container was provisioned. Every session, including ones that would never touch a sandbox, paid the full container setup cost upfront. After decoupling, containers are provisioned via tool call only when needed. Inference starts as soon as the orchestration layer pulls pending events from the session log. The team reports p50 time-to-first-token dropped roughly 60 percent, and p95 dropped over 90 percent.
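The latency win comes from deferring the container until the first tool call actually needs it. This sketch counts provisioning events instead of measuring time. `LazySandbox`, `fake_provision`, and `run_session` are illustrative names, not part of the service.

```python
from typing import Callable, Optional

Container = Callable[[str], str]


class LazySandbox:
    """Provisions its container on the first tool call, not at session start."""
    def __init__(self, provision: Callable[[], Container]) -> None:
        self._provision = provision
        self._container: Optional[Container] = None
        self.provision_count = 0

    def run(self, command: str) -> str:
        if self._container is None:
            self._container = self._provision()  # setup cost paid only here
            self.provision_count += 1
        return self._container(command)


def fake_provision() -> Container:
    # Stand-in for slow container setup (image pull, boot, repo clone).
    return lambda cmd: f"ok: {cmd}"


def run_session(needs_sandbox: bool) -> int:
    sandbox = LazySandbox(fake_provision)
    # Inference could start here immediately; nothing has been provisioned yet.
    if needs_sandbox:
        sandbox.run("pytest")
        sandbox.run("git diff")
    return sandbox.provision_count


print(run_session(needs_sandbox=False))  # 0
print(run_session(needs_sandbox=True))   # 1
```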

On the hands side: earlier models were not capable of reasoning about multiple execution environments and deciding where to send work. A single container was the right constraint for the capability level. As model intelligence scaled, the single container became the limitation – a failure there lost state for every hand the brain was reaching into. Once each hand is just a tool call, brains can operate across many hands simultaneously. And because no hand is coupled to any brain, brains can pass hands to one another.
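Once every hand is just a callable behind a tool name, routing across several hands and handing one to another brain reduce to dictionary operations. `Brain`, `make_hand`, and `hand_off` below are hypothetical illustrations of the idea, not the service's API.

```python
from typing import Callable, Dict

Hand = Callable[[str], str]


def make_hand(label: str) -> Hand:
    # Stand-in for a sandbox, browser, or any other tool endpoint.
    return lambda command: f"{label}: {command}"


class Brain:
    """A harness instance that can route work to any number of hands."""
    def __init__(self, name: str) -> None:
        self.name = name
        self.hands: Dict[str, Hand] = {}

    def attach(self, hand_id: str, hand: Hand) -> None:
        self.hands[hand_id] = hand

    def call(self, hand_id: str, tool_input: str) -> str:
        hand = self.hands.get(hand_id)
        if hand is None:
            return f"tool_error: no hand named {hand_id!r}"
        return hand(tool_input)

    def hand_off(self, hand_id: str, other: "Brain") -> None:
        """Give one of this brain's hands to another brain; the hand itself is untouched."""
        other.attach(hand_id, self.hands.pop(hand_id))


planner = Brain("planner")
planner.attach("linux-box", make_hand("linux-box"))
planner.attach("browser", make_hand("browser"))
print(planner.call("linux-box", "make test"))   # linux-box: make test

researcher = Brain("researcher")
planner.hand_off("browser", researcher)
print(researcher.call("browser", "open docs"))  # browser: open docs
print(planner.call("browser", "open docs"))     # tool_error: no hand named 'browser'
```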

The Underlying Principle

The architecture draws an explicit analogy to operating systems. OS abstractions like the process and the file were general enough to outlast the hardware they originally virtualized. The read() system call is agnostic as to whether it is accessing a 1970s disk pack or a modern SSD. The abstractions stayed stable while the implementations changed freely.

Managed Agents applies the same pattern to agent infrastructure. Session, harness, and sandbox are virtualized interfaces. The team is opinionated about the shape of those interfaces, not about what runs behind them. Claude Code is an excellent harness for many tasks. Task-specific harnesses excel in narrow domains. Managed Agents can accommodate either – or whatever harnesses turn out to be right for models that do not exist yet.

That last point is the design goal stated explicitly: build a system for “programs as yet unthought of.” It is a harder target than building for today’s requirements, but it is the only target that makes sense when the underlying model is improving as fast as Claude is.