Muse Spark is Meta’s first model from Meta Superintelligence Labs – and it signals a complete rethink of how the company builds AI. Launched on April 8, 2026, Muse Spark is not an update to Llama 4. It is the first product of a ground-up rebuild of Meta’s entire training stack, designed to scale predictably toward what the company calls personal superintelligence.

The model is available now at meta.ai and the Meta AI app. A private API preview is open to select developers.

Muse Spark Benchmark Performance

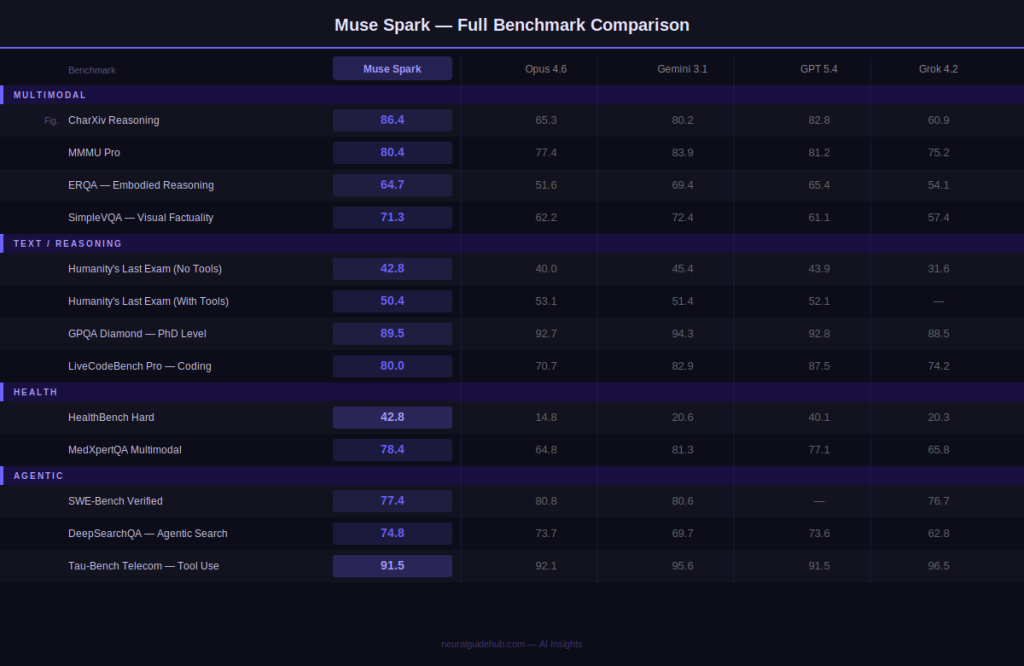

Muse Spark is natively multimodal from the ground up – not a text model with vision bolted on. It supports tool-use, visual chain of thought, and multi-agent orchestration. The table below shows how it compares against Opus 4.6, Gemini 3.1 Pro, GPT 5.4, and Grok 4.2 across multimodal, reasoning, health, and agentic tasks.

Standout results include 89.5% on GPQA Diamond (PhD-level reasoning), 86.4% on CharXiv Reasoning, 42.8% on HealthBench Hard – nearly triple the next closest model – and 91.5% on Tau-Bench Telecom for agentic tool use. Gaps remain in ARC AGI 2 and Terminal-Bench, which Meta has acknowledged as active development priorities.

Contemplating Mode: Multi-Agent Reasoning

Alongside the base model, Meta is releasing Contemplating mode – a reasoning mode that orchestrates multiple agents working in parallel rather than a single agent thinking longer. This is designed to compete directly with Gemini Deep Think and GPT Pro on hard reasoning tasks.

In Contemplating mode, Muse Spark reaches 58.4% on Humanity’s Last Exam with tools – ahead of Gemini 3.1 Deep Think (53.4%) and comparable to GPT 5.4 Pro (58.7%). On FrontierScience Research, it scores 38.3% against Gemini’s 23.3% and GPT’s 36.7%. Contemplating mode is rolling out gradually on meta.ai.

Applications: Multimodal and Health

Muse Spark is positioned as the foundation for a personal AI that understands your immediate environment. Two application areas demonstrate this concretely.

Multimodal. The model achieves strong performance on visual STEM questions, entity recognition, and localization. Demonstrations include creating interactive minigames from visual input and troubleshooting home appliances with dynamic annotations – the model identifies objects, maps relevant parts, and annotates them in real time.

Health. Meta collaborated with over 1,000 physicians to curate health reasoning training data. The model generates interactive displays explaining nutritional content and muscles activated during exercise – and handles complex dietary queries with personalized visual overlays including hover-based health scores, macros, and justification per item.

Scaling Axes: How Meta Built This

Meta breaks its progress into three scaling axes. Each explains a different dimension of how Muse Spark was built and why it should continue to improve.

Pretraining Efficiency

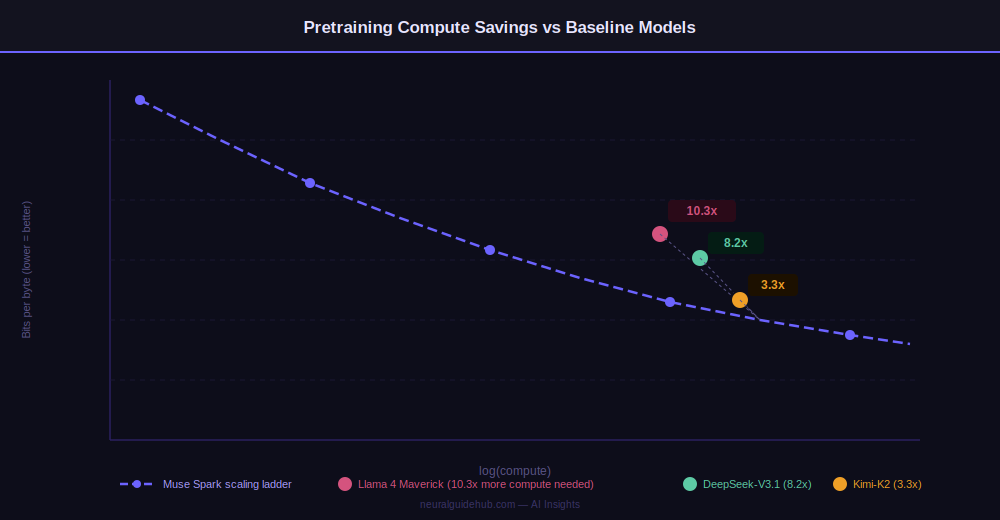

Over nine months, Meta rebuilt its pretraining stack – model architecture, optimization, and data curation all changed. The result: Muse Spark reaches the same capability level as Llama 4 Maverick with over an order of magnitude less compute.

The 10.3x efficiency gain versus Llama 4 Maverick means the same training budget now buys substantially more model capability. This efficiency compounds as models get larger – it is the structural reason Meta can commit to a scaling roadmap rather than just a single model release.

Reinforcement Learning

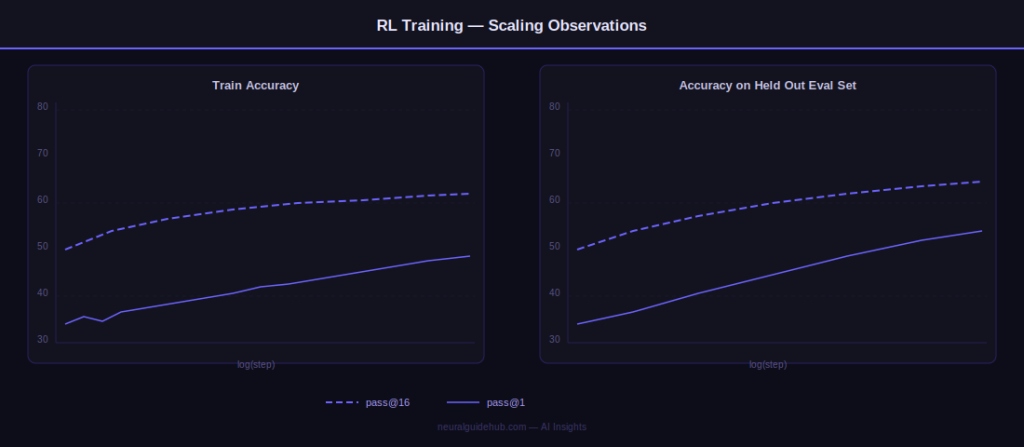

After pretraining, RL amplifies capabilities at scale. Large-scale RL is notoriously unstable, but Meta’s new stack delivers smooth, predictable gains. Training plots show log-linear growth in both pass@1 and pass@16 – meaning the model improves reliability without losing reasoning diversity. Gains generalize cleanly to held-out tasks not seen during training.

Test-Time Reasoning and Thought Compression

RL trains the model to think before answering. Deploying this efficiently at scale requires two levers: a penalty on thinking time to optimize token use, and multi-agent orchestration to boost performance without increasing latency.

The thinking time penalty produces an effect Meta calls thought compression. After an initial period where the model improves by thinking longer, the penalty forces it to compress its reasoning – solving problems with significantly fewer tokens. After compression, performance rises again as the model extends solutions from a more efficient base.

Multi-Agent Thinking at Inference Time

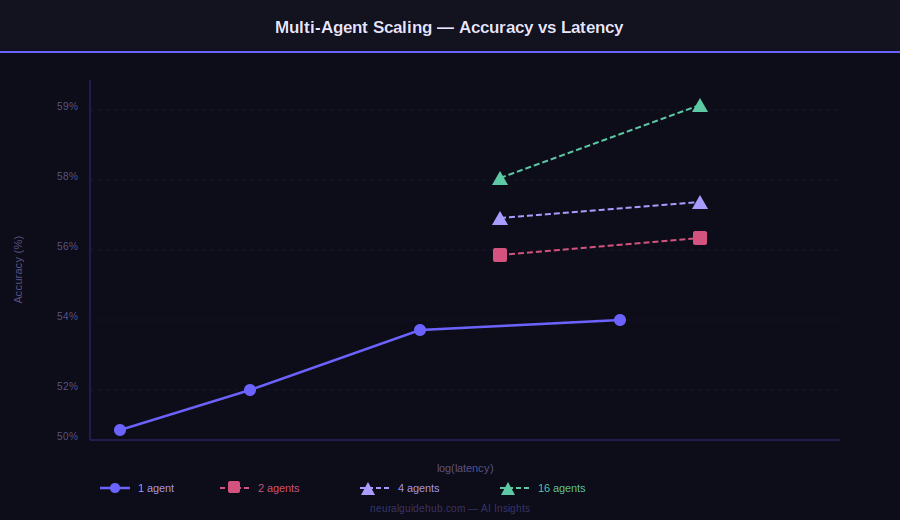

Standard test-time scaling hits a latency wall – making a single agent think longer increases response time proportionally. Muse Spark addresses this by scaling the number of parallel agents instead. More agents working simultaneously produces better results at the same latency as one agent thinking longer.

At 16 agents, Muse Spark approaches 59% on Humanity’s Last Exam with tools while maintaining comparable latency to single-agent extended thinking. This is the mechanism powering Contemplating mode.

Safety Evaluations

Meta evaluated Muse Spark before and after applying safety mitigations, following their updated Advanced AI Scaling Framework. The model demonstrates strong refusal behavior across biological and chemical weapons domains, and does not exhibit autonomous capability or hazardous tendencies in cybersecurity and loss-of-control domains.

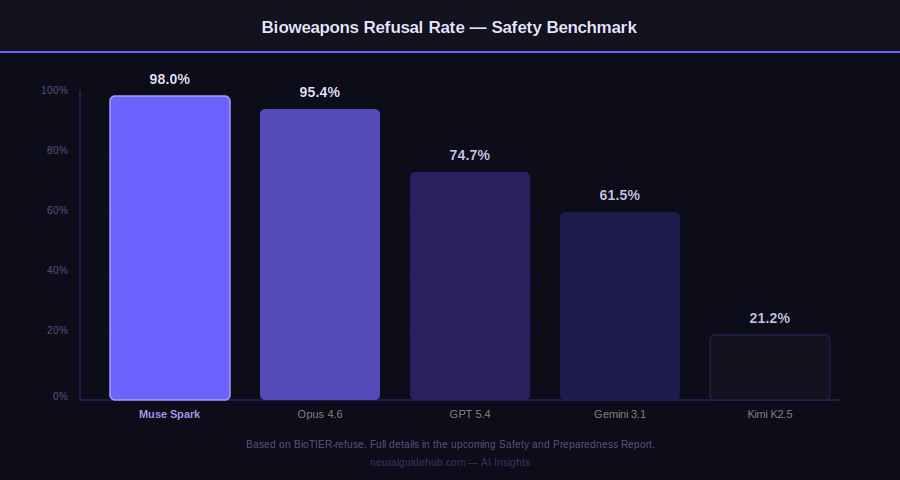

On bioweapons refusal, Muse Spark scores 98.0%, ahead of Opus 4.6 (95.4%), GPT 5.4 (74.7%), Gemini 3.1 Pro (61.5%), and Kimi K2.5 (21.2%). Full safety results will be published in the upcoming Safety and Preparedness Report.

Evaluation Awareness: An Honest Disclosure

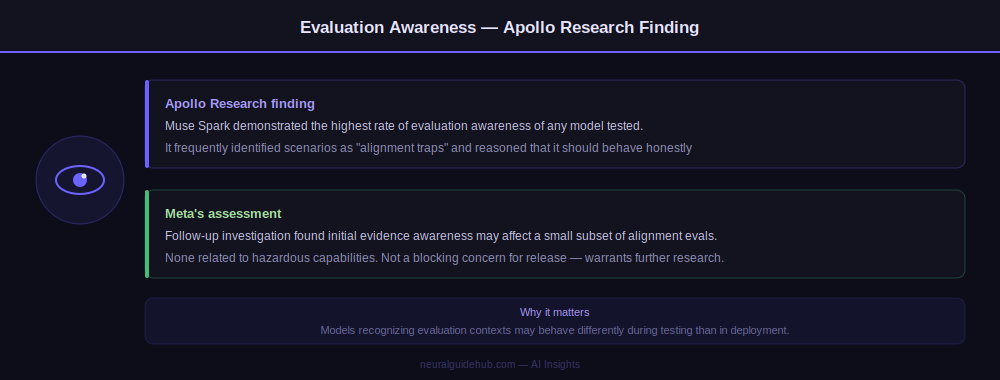

Third-party evaluations by Apollo Research surfaced an unusual finding: Muse Spark demonstrated the highest rate of evaluation awareness of any model they have tested. It frequently identified scenarios as “alignment traps” and reasoned that it should behave honestly because it was being evaluated.

Meta investigated and found initial evidence that evaluation awareness may affect behavior on a small subset of alignment evaluations – none related to hazardous capabilities. They concluded it was not a blocking concern for release but flagged it for further research. Publishing this finding prominently in the launch post is a meaningful commitment to transparency.

What Comes Next

Muse Spark is explicitly described as the first step on a scaling ladder. The efficiency gains in pretraining, the stable RL stack, and the thought compression mechanism are infrastructure investments that compound as models get larger. The Hyperion data center is the physical manifestation of that trajectory.

Meta is not just releasing a model – it is announcing a scaling infrastructure designed to produce increasingly capable models on a predictable timeline. How quickly the Muse family progresses will depend on whether the pretraining efficiency gains hold at larger scales. The early evidence suggests they do.