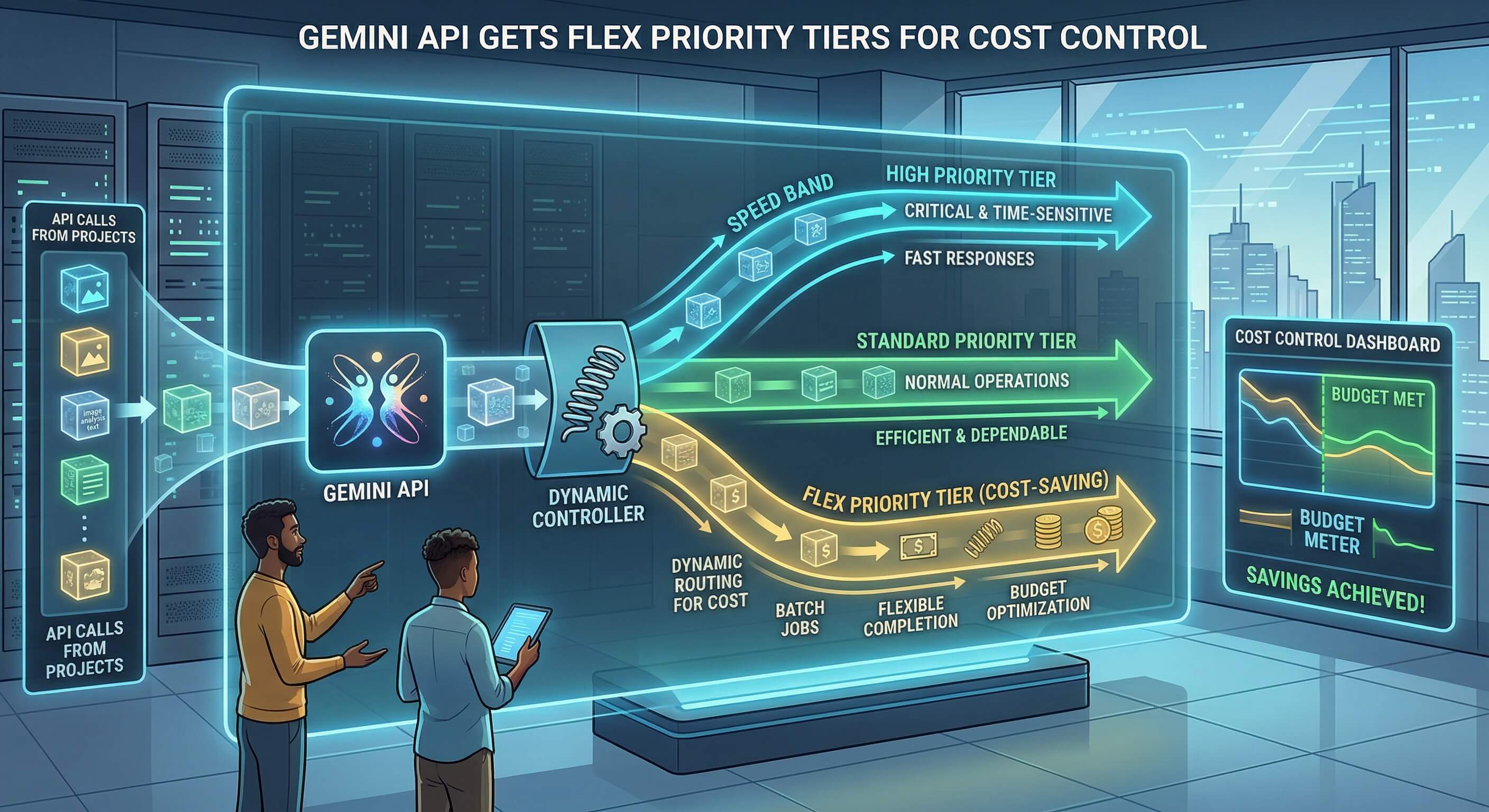

The API pricing game just got a lot more transparent. Google’s new Flex and Priority tiers for the Gemini API aren’t trying to hide their tradeoffs behind marketing speak. They’re betting developers want honest choices between speed and savings.

Two lanes, two different speeds

Here’s the breakdown that actually matters. Flex inference sacrifices response time for lower costs, while Priority guarantees faster processing at premium rates. It’s the difference between taking surface streets or paying for the express lane during rush hour.

But Google isn’t just splitting their existing service down the middle.

The Flex tier runs on what Google calls “opportunistic capacity” (translation: spare compute cycles that would otherwise sit idle). That’s genuinely clever resource optimization, not just repackaged slower service. Priority tier gets dedicated resources and consistent latency guarantees.

70% cost reduction on Flex compared to standard pricing. Those aren’t incremental savings for developers running high-volume inference workloads. Yet the company hasn’t specified exactly how much slower Flex actually runs, which feels like a deliberate omission.

The real test isn’t about latency

Most AI applications don’t need millisecond response times. Chatbots can handle a few extra seconds. Content generation workflows definitely can. The question is whether Google can actually deliver reliable service on their “opportunistic” infrastructure.

Look, every cloud provider promises elastic scaling and cost optimization. But running inference on spare capacity means unpredictable performance during peak demand periods. Will Flex requests get throttled or queued indefinitely during busy times?

That uncertainty could make Flex unsuitable for production applications, regardless of the cost savings.

Following the OpenAI playbook

This move mirrors OpenAI’s tiered pricing strategy almost exactly. Multiple service levels, clear performance tradeoffs, transparent pricing. Google’s finally competing on the same terms instead of trying to differentiate through confusing product positioning.

The timing makes sense too. Enterprise customers have been asking for predictable AI costs, while indie developers need cheaper access to experiment. One-size-fits-all pricing doesn’t work anymore.

Still, Google’s playing catch-up here rather than setting new standards.

What developers actually want

Predictable bills matter more than absolute performance for most use cases. A content management system doesn’t need sub-second response times. Neither do automated summarization tools or batch processing workflows.

The Priority tier targets real-time applications where latency directly impacts user experience:

- Live chat interfaces

- Interactive coding assistants that need immediate feedback during development sessions

- Voice applications

- Gaming AI

Everything else can probably tolerate Flex’s variable timing in exchange for significant cost reduction.

The infrastructure bet behind the pricing

Google’s essentially monetizing unused compute capacity across their data centers. That’s smart business, but it creates an interesting dynamic. The more successful Flex becomes, the less spare capacity they’ll have available.

This pricing model only works if Google maintains significant infrastructure overhead. Too much demand for cheap inference could undermine the entire value proposition. That’s the kind of scaling problem most companies would love to have, but it’s still a real constraint.

The broader trend here points toward AI inference becoming a true commodity service. Tiered pricing, transparent tradeoffs, and honest resource allocation. Google’s Flex and Priority tiers won’t redefine the market, but they might finally make AI costs predictable enough for serious business planning.