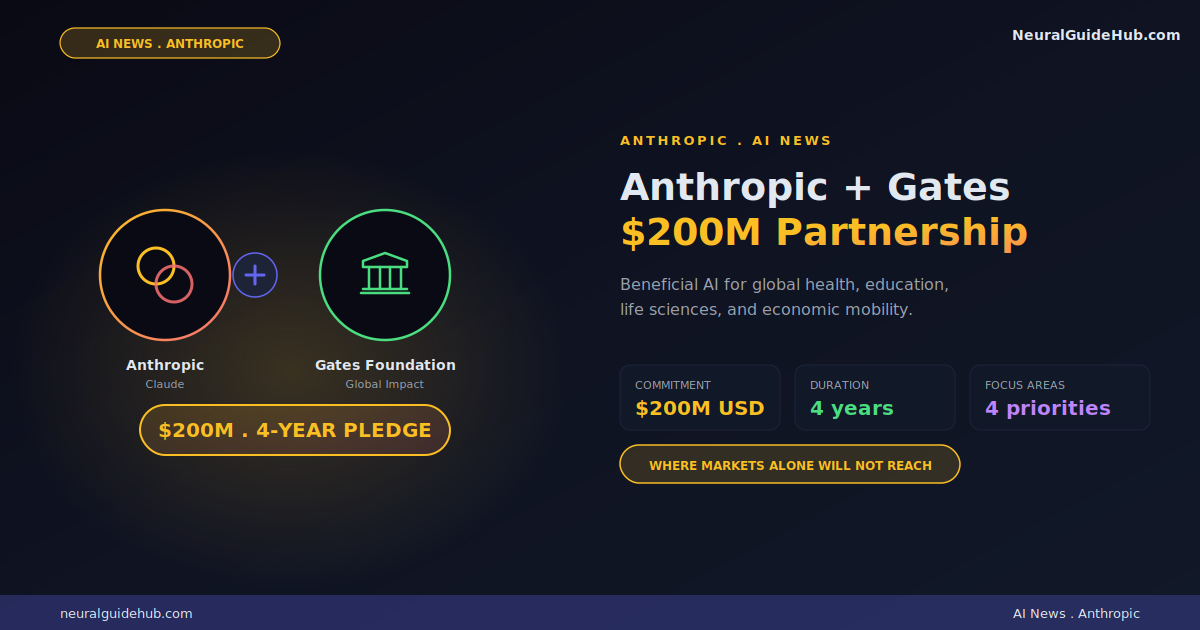

Anthropic just announced a four-year commitment with the Gates Foundation worth $200 million. The mix is grant funding, Claude usage credits, and technical support, directed at programs in global health, life sciences, education, and economic mobility. The Anthropic-Gates Foundation partnership is the largest beneficial deployment commitment Anthropic has made publicly, and the structure is more interesting than the headline number suggests.

I’ve watched the AI-for-good space evolve over the past three years. Most announcements in this category overpromise. They commit money, attach a glossy press release, and then the deployment never finds a path to real impact. The Anthropic announcement reads differently. It names specific diseases, specific regions, and specific institutional partners. That level of specificity usually means someone did the planning work before the press release went out.

What the $200M actually covers

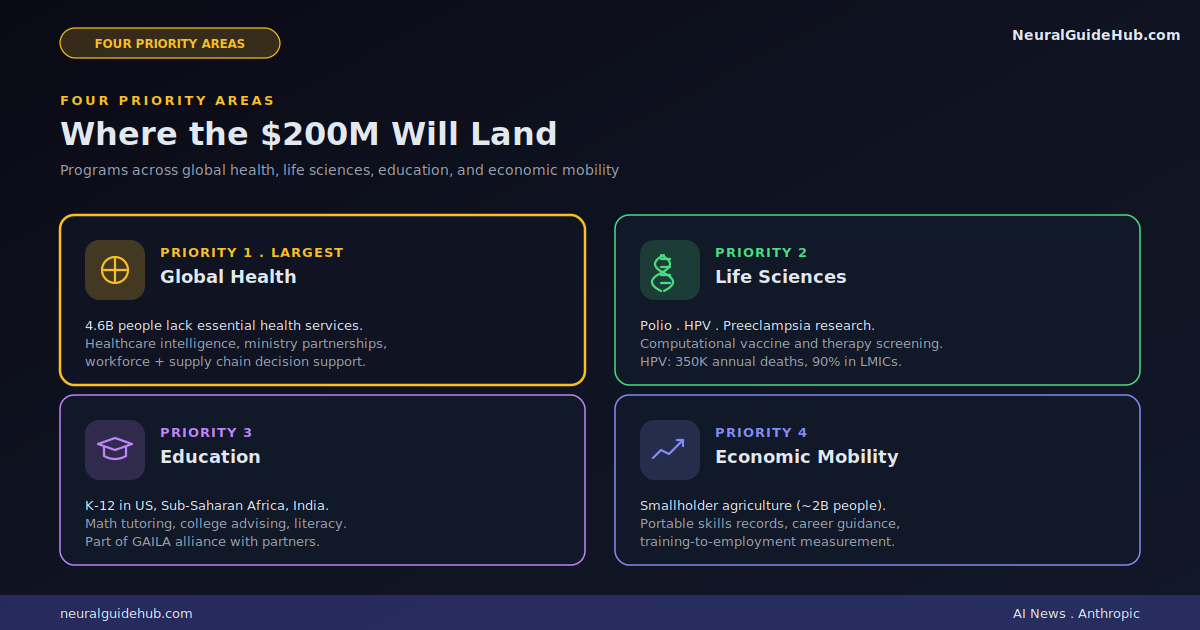

The commitment spans four priority areas. Global health and life sciences, taken together, get the largest share. Education sits in the middle. Economic mobility rounds out the program. All of it runs through Anthropic’s Beneficial Deployments team, the group that provides Claude credits and engineering support to mission-driven partners.

The team also produces what Anthropic calls AI-related public goods: public health datasets, evaluation benchmarks, and discounted Claude access for nonprofits and educational institutions. The $200M isn’t just a cash transfer. It’s a programmatic commitment to keep producing tools whose public benefit extends beyond the partnership itself.

For a frontier AI lab, that’s a meaningful structural choice. Most AI-for-good programs run as side projects next to the core business. This one is run by a dedicated team with permanent infrastructure. The difference matters for execution.

Global health and life sciences gets the biggest slice

The largest part of the commitment focuses on health outcomes in low- and middle-income countries. The framing here is important. Around 4.6 billion people lack access to essential health services. That’s not a market AI companies have been serving. The Anthropic deployment is explicitly aimed at gaps that markets alone will not fill.

Three concrete workstreams stand out. The first is healthcare intelligence: building connectors, benchmarks, and evaluation frameworks so researchers and governments can measure how AI systems actually perform on healthcare tasks. That’s the unsexy infrastructure work that almost always gets skipped in flashier deployments.

The second is direct partnership with health ministries on workforce deployment, supply chain management, and outbreak detection. This is where AI assistance actually meets operational reality. Health ministries make staffing and logistics decisions every day. Better data plus better reasoning support could change those decisions for the better.

The third is research on high-burden and neglected diseases. The starting list is polio, HPV, and eclampsia/preeclampsia. HPV alone causes around 350,000 deaths annually, with 90% of those in low- and middle-income countries. The plan is to use Claude to screen vaccine and therapy candidates computationally before pre-clinical development. If it works, that kind of screening could shorten development timelines by narrowing the candidate pool before lab work begins.

The IDM forecasting integration

Buried in the global health section is a partnership with the Institute for Disease Modeling, which sits inside the Gates Foundation. IDM produces forecasts that drive treatment deployment decisions for diseases like malaria and tuberculosis. The Claude integration aims to make those forecasts more accessible to practitioners who aren’t modeling specialists.

This is the kind of detail I’d flag as quietly important. Disease modeling is a specialist field. Most field workers and health ministry officials can’t run the models themselves. If Claude can act as a translation layer between specialist forecasts and operational decision-making, that closes a real gap. It could also help IDM build more predictive models, because more feedback from practitioners would flow back to the modeling team.

Education: K-12 plus foundational literacy

The education side of the partnership covers K-12 students in the US, sub-Saharan Africa, and India. The first deliverables are public goods like model benchmarks, datasets, and knowledge graphs. The framing is that AI tools for math tutoring, college advising, and curriculum design need infrastructure before they can be effective at scale.

In the US, Claude will power evidence-based tutoring and career guidance tools for students moving into the workforce. In sub-Saharan Africa and India, the focus shifts to foundational literacy and numeracy. These are different problems with different deployment requirements. The fact that the partnership acknowledges that distinction is a good sign.

Anthropic is also joining the broader Global AI for Learning Alliance (GAILA) alongside the Gates Foundation and other partners. Cross-organizational alliances in the AI education space have a mixed track record, but the scope here is large enough to warrant attention.

Economic mobility: agriculture plus workforce

The economic mobility section has two distinct tracks. The first focuses on smallholder agriculture, which is the income source for nearly two billion people globally. The plan is to make agriculture-specific improvements to Claude, build datasets of local crops, and develop benchmarks for agricultural applications. These tools will be released as public goods.

The second track is US-focused workforce mobility, with three workstreams: portable records of skills and certifications that move with workers across schools and jobs; trustworthy career guidance for new entrants and those retraining; and tools that link training program data to employment outcomes, so the field can measure which interventions actually improve job and wage outcomes.

The measurement piece is what stands out for me. Workforce development programs in the US have struggled to prove impact because outcome data has been fragmented across systems. Building tools that connect training inputs to employment outputs is exactly the kind of infrastructure work that could meaningfully improve the field.
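To make the data-plumbing idea concrete, here is a minimal sketch of what linking training inputs to employment outputs might look like. Everything in it is an assumption for illustration: the record fields, the program names, the sample values, and the `summarize_outcomes` helper are hypothetical and are not drawn from anything Anthropic or the Gates Foundation has published.

```python
from collections import defaultdict
from statistics import median

# Hypothetical records; field names and values are invented for illustration.
training_records = [
    {"worker_id": "w1", "program": "welding-cert"},
    {"worker_id": "w2", "program": "welding-cert"},
    {"worker_id": "w3", "program": "it-support"},
    {"worker_id": "w4", "program": "it-support"},
]

employment_records = [
    {"worker_id": "w1", "employed": True, "hourly_wage": 24.0},
    {"worker_id": "w2", "employed": False, "hourly_wage": None},
    {"worker_id": "w3", "employed": True, "hourly_wage": 21.0},
    # w4 has no outcome record: the fragmentation problem in miniature.
]


def summarize_outcomes(training, employment):
    """Join training records to employment outcomes by worker_id and
    report per-program match counts, employment rate, and median wage."""
    outcomes = {rec["worker_id"]: rec for rec in employment}
    by_program = defaultdict(
        lambda: {"enrolled": 0, "matched": 0, "employed": 0, "wages": []}
    )

    for rec in training:
        stats = by_program[rec["program"]]
        stats["enrolled"] += 1
        outcome = outcomes.get(rec["worker_id"])
        if outcome is None:
            continue  # no linked outcome, so this worker cannot be measured
        stats["matched"] += 1
        if outcome["employed"]:
            stats["employed"] += 1
            if outcome["hourly_wage"] is not None:
                stats["wages"].append(outcome["hourly_wage"])

    summary = {}
    for program, s in by_program.items():
        summary[program] = {
            "enrolled": s["enrolled"],
            "matched": s["matched"],
            # Rate is computed over matched workers only, not all enrollees.
            "employment_rate": s["employed"] / s["matched"] if s["matched"] else None,
            "median_wage": median(s["wages"]) if s["wages"] else None,
        }
    return summary


summary = summarize_outcomes(training_records, employment_records)
for program, stats in summary.items():
    print(program, stats)
```

The gap between `enrolled` and `matched` is the part worth noticing. When outcome data lives in a different system from enrollment data, any employment rate only describes the workers who could be linked, which is why shared identifiers and portable records matter as much as the analysis itself.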

Why this matters in the broader AI landscape

Most large AI labs talk about beneficial AI in their mission statements. Few have committed structural resources to it on this scale. The Anthropic-Gates Foundation partnership stands out because the commitment is multi-year, programmatic, and aimed at categories where markets won’t naturally allocate AI investment.

Pharma companies don’t prioritize HPV vaccines for low-income countries. Edtech companies don’t prioritize foundational literacy in rural sub-Saharan Africa. Agricultural AI startups don’t focus on smallholder farmers because the unit economics don’t work. These are exactly the gaps the partnership targets.

Whether the deployment actually moves the needle depends on execution. AI-for-good efforts have a long history of well-intentioned programs that didn’t translate into measurable impact. The Anthropic structure, with a dedicated Beneficial Deployments team and a four-year time horizon, gives this partnership a better chance than most.

What I’d watch for next

A few questions worth tracking as the partnership rolls out.

What benchmarks does Anthropic publish for healthcare AI? The promised evaluation frameworks could become important infrastructure for the broader field. If they’re rigorous and openly available, other labs will use them too. That’s the kind of public good that compounds.

How do the polio, HPV, and preeclampsia vaccine and therapy discovery efforts play out? Computational vaccine screening has been an active research direction for years. If this works, I’d expect early signs within 18 to 24 months, in the form of shortened pre-clinical timelines. That’s a meaningful test of whether AI is actually accelerating biology or just running in parallel to it.

Does the workforce mobility data infrastructure get adopted? Connecting training programs to employment outcomes has been a holy grail for US workforce development for two decades. If Anthropic and the Gates Foundation can build the data plumbing that lets researchers measure intervention effectiveness, that’s a category-defining contribution.

The bigger picture

Anthropic’s commitment to publish thinking and decision-making as the partnership progresses is worth noting. Most beneficial AI announcements stay opaque after the launch press release. Public documentation of how programs are scoped, how impact is measured, and what works versus what doesn’t would be genuinely valuable for the field.

For anyone tracking how frontier AI labs are positioning themselves on social impact, this partnership sets a higher bar. It commits $200 million across four years, with named programs, named diseases, named regions, and a dedicated execution team. That’s a different posture than the typical AI-for-good announcement. It’s worth watching to see whether execution lives up to the framing.